The transition from manual, craft-based phishing to industrial-scale, AI-driven operations has effectively dismantled many of the traditional barriers that once protected corporate networks. Not long ago, a phishing campaign required significant human effort to draft convincing copy, research targets, and manage infrastructure; today’s generative models compress that work into seconds. This shift is not merely an incremental gain in criminal efficiency. It is a fundamental change in how social engineering is executed: automated systems now produce high-fidelity lures that lack the telltale signs of traditional fraud, such as clumsy grammar and generic greetings. As the volume of these attacks grows, the cybersecurity industry must reckon with a reality in which the sheer velocity of machine-generated threats can overwhelm manual defenses. The era of the “low-effort” scam is ending, and every employee is now a potential high-value target for a precision-engineered digital assault. Data indicates that AI-generated threat activity recently surged 14-fold, rising from a marginal share to more than half of all detected threats.

The Evolution of Sophisticated Attack Vectors

Diversification: Moving Beyond the Standard Inbox

As standard email security protocols have matured, threat actors have shifted their focus toward less-guarded digital channels. One of the most insidious methods currently circulating exploits calendar invitations delivered as malicious .ics files, which reportedly achieve a significantly higher success rate than traditional email-based lures. These attacks work because they place a malicious link directly into an employee’s schedule. Many calendar clients add incoming invitations automatically, so the link persists as a digital landmine even if the original notification email is deleted. The technique also slips past gateway filters that concentrate on scanning email body text rather than calendar attachments. And because users typically trust their own schedules, the psychological barrier to clicking is much lower. In effect, attackers weaponize the inherent trust placed in corporate productivity tools: the malicious link stays visible and accessible throughout the workday, and every reminder gives repetition and familiarity another chance to produce a click.
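To make the calendar vector concrete, here is a minimal Python sketch of the kind of check a defender might run on inbound .ics attachments. It undoes RFC 5545 line folding, pulls URLs out of text-bearing VEVENT properties, and flags any host that is not on an allowlist. The property list, the allowlist, and the function names are illustrative assumptions rather than any product's API, and the simple colon split does not handle quoted parameter values that themselves contain colons.

```python
import re
from urllib.parse import urlparse

# VEVENT properties that commonly carry user-visible text or links.
# Illustrative assumption: real invites can hide links in other properties too.
SCANNED_FIELDS = {"SUMMARY", "DESCRIPTION", "LOCATION", "URL", "X-ALT-DESC"}

# Stop at whitespace, quotes, angle brackets, and backslashes (iCalendar text escapes like \n or \,).
URL_RE = re.compile(r"https?://[^\s\"'<>\\]+", re.IGNORECASE)


def unfold(ics_text: str) -> list[str]:
    """Undo RFC 5545 line folding: a line starting with a space or tab continues the previous line."""
    lines: list[str] = []
    for raw in ics_text.splitlines():
        if raw[:1] in (" ", "\t") and lines:
            lines[-1] += raw[1:]
        else:
            lines.append(raw)
    return lines


def suspicious_links(ics_text: str, allowed_domains: set[str]) -> list[str]:
    """Return URLs from scanned properties whose host is not an allowlisted domain or a subdomain of one."""
    flagged: list[str] = []
    for line in unfold(ics_text):
        # Simplification: split at the first colon, so quoted parameter values containing ':' are not handled.
        name, sep, value = line.partition(":")
        if not sep:
            continue
        prop = name.split(";", 1)[0].upper()  # drop parameters such as ;LANGUAGE=en
        if prop not in SCANNED_FIELDS:
            continue
        for url in URL_RE.findall(value):
            host = (urlparse(url).hostname or "").lower()
            if not any(host == d or host.endswith("." + d) for d in allowed_domains):
                flagged.append(url)
    return flagged
```

Unfolding first matters because a long URL can be split across physical lines; without it, a scanner would see only a truncated fragment of the link.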

Precision: Targeted Recruitment and Social Reconnaissance

Modern threat actors increasingly use generative models for deep reconnaissance, synthesizing data from professional networks such as LinkedIn, corporate filings, and social media footprints to create highly personalized lures. By targeting specific functional teams, such as sales or marketing, with fraudulent job opportunities or partnership inquiries, attackers sidestep general skepticism. These recruitment-based scams impersonate reputable global brands with flawless language and match the tone and vocabulary of the target’s industry, making them nearly impossible to distinguish from legitimate professional outreach. The goal often extends beyond simple credential harvesting: many of these campaigns seek to hijack corporate social media or advertising accounts to facilitate further fraud. At this level of precision, phishing is no longer a game of chance but a calculated strategic operation that exploits the very professional connections on which modern businesses rely.

Technical Evasion and the Failure of Traditional Filters

Overcoming Signatures: The End of Predictable Patterns

The technical architecture of modern phishing campaigns has evolved specifically to circumvent signature-based detection and traditional natural language processing filters. Security systems once relied on identifying “trigger words” or recurring structural patterns common in fraudulent emails, but AI lets scammers vary phrasing and vocabulary almost without limit. No two malicious messages need be identical, so static blocklists and signature databases lose most of their value against the message text itself. By instructing a model to adopt different personas or structural styles, threat actors keep each message unique and organic-looking to automated scanners. This fluid approach lets lures slip through enterprise-grade defenses that were designed to catch the repetitive, poorly written scams of previous years. The primary defense mechanisms of the last decade are struggling to keep pace with the polymorphic nature of machine-generated content, forcing a radical rethink of how organizations define and detect malicious intent.
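The following sketch illustrates why exact-match signatures struggle. It implements a deliberately old-style filter: a set of SHA-256 fingerprints of previously reported messages plus a static trigger-phrase list. The sample lure, the phrase list, and the function names are hypothetical, chosen only to show the failure mode.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial formatting changes do not alter the fingerprint."""
    return re.sub(r"\s+", " ", text.strip().lower())


def fingerprint(text: str) -> str:
    """SHA-256 of the normalized text: an exact-match signature."""
    return hashlib.sha256(normalize(text).encode("utf-8")).hexdigest()


# Hypothetical signature store: fingerprints of previously reported lures
# plus a static trigger-phrase list, in the style of older content filters.
KNOWN_BAD = {fingerprint("Your account has been suspended. Verify your password immediately.")}
TRIGGER_PHRASES = {"account has been suspended", "verify your password", "urgent wire transfer"}


def signature_filter(message: str) -> bool:
    """Return True if the message matches a known fingerprint or contains a trigger phrase."""
    if fingerprint(message) in KNOWN_BAD:
        return True
    text = normalize(message)
    return any(phrase in text for phrase in TRIGGER_PHRASES)
```

A reformatted copy of the known lure (different capitalization and line breaks) still matches after normalization. A paraphrase with the same intent, such as a note asking the recipient to "re-confirm your sign-in details" because of a "hold on your workspace access", matches neither the fingerprint nor any trigger phrase, which is exactly the gap that machine-generated variation exploits.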

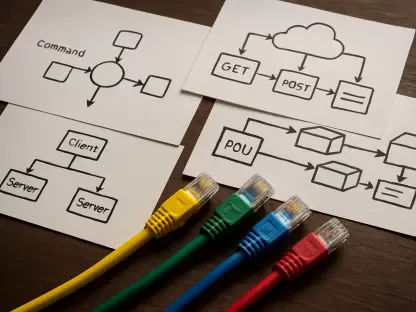

Redirection: Exploiting Payloads and Callback Tactics

While malicious links remain the most common delivery mechanism for cyber threats, the methods used to disguise them have become remarkably complex. Attackers frequently abuse open redirects on trusted, high-reputation websites: the link points at a legitimate domain that then forwards the user to a malicious destination, which misleads automated URL scanners that judge a link by its visible domain. There is also a growing trend toward “callback phishing,” a hybrid approach that combines digital messages with voice-based social engineering. In these scenarios, an AI-crafted email prompts the user to call a specific phone number to resolve an urgent issue, and the attacker then uses human or AI-generated voice interaction to extract sensitive information. Because such emails often contain no link or attachment at all, there is little for a content filter to inspect, and the decisive step happens on a phone call outside the channels that email security tools monitor. By blending these tactics with contextually relevant narratives, such as urgent invoice discrepancies or security alerts, attackers create a sense of pressure that pushes employees to bypass their own security training in favor of immediate resolution.
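Open-redirect abuse can be partly unmasked without visiting the link. This minimal sketch assumes the redirect target travels in a query parameter, which is a common but not universal pattern. It statically walks nested http(s) URLs found under redirect-style parameter names and reports whether the final destination host differs from the visible one. The parameter-name list and function names are illustrative assumptions; real redirectors can also encode targets in path segments or resolve them server-side, which static parsing cannot see.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse

# Query parameter names commonly used by redirect endpoints.
# Illustrative assumption, not a complete list.
REDIRECT_PARAMS = {"url", "u", "redirect", "redirect_uri", "next", "target", "dest", "continue"}


def _nested_url(url: str) -> Optional[str]:
    """Return the first http(s) URL found in a redirect-style query parameter, if any."""
    # parse_qs percent-decodes each value once, which peels one layer of encoding per hop.
    for key, values in parse_qs(urlparse(url).query).items():
        if key.lower() in REDIRECT_PARAMS:
            for value in values:
                if urlparse(value).scheme in ("http", "https"):
                    return value
    return None


def embedded_targets(url: str, max_hops: int = 5) -> list[str]:
    """Statically follow nested redirect targets without making any network requests."""
    chain: list[str] = []
    current = url
    for _ in range(max_hops):
        nested = _nested_url(current)
        if nested is None:
            break
        chain.append(nested)
        current = nested
    return chain


def final_host(url: str) -> str:
    """Host of the innermost embedded target, or of the URL itself if none is found."""
    chain = embedded_targets(url)
    return (urlparse(chain[-1] if chain else url).hostname or "").lower()


def redirect_mismatch(url: str) -> bool:
    """True if the visible host forwards to a different embedded destination host."""
    return final_host(url) != (urlparse(url).hostname or "").lower()
```

For a link such as `https://trusted.example/redirect?url=https%3A%2F%2Fevil.test%2Flogin`, `final_host` returns `evil.test`, so a scanner that judged only `trusted.example` would be misled.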

Strategic Defensive Shifts in the Age of AI

Behavioral Science: Strengthening the Human Frontline

Because contemporary phishing attacks are designed to exploit human psychology rather than technical vulnerabilities, the most effective defensive strategies have shifted toward behavioral science. Organizations are moving away from the “check-the-box” compliance training of the past in favor of adaptive, realistic simulations that adjust to each employee’s risk profile. Data shows that companies implementing these behavior-focused programs have seen a dramatic reduction in successful phishing clicks and a six-fold increase in proactive threat reporting. The approach builds a “security culture” in which employees learn to recognize the subtle psychological triggers that AI-generated lures rely on, such as manufactured urgency or appeals to authority. By making the workforce an active part of the detection network, businesses create a resilient human firewall that supplements technical controls. The underlying logic is simple: AI can generate the lure, but the attack still requires a human action to succeed, which makes behavioral change a critical pillar of any modern cybersecurity framework.

Autonomous Defense: The Reality of Bot-on-Bot Conflict

The shrinking window between an initial network breach and lateral movement has made it necessary to deploy autonomous defensive systems that operate at the same speed as attackers. In this “bot-on-bot” environment, security teams use AI-driven defenders to deploy adaptive honeypots and honeytokens (decoy credentials, files, or records that no legitimate user should ever touch) across the network. These systems are designed to misdirect malicious AI agents and other automated attack tooling into isolated environments where their tactics can be studied without risking real corporate data. Because human defenders cannot react within the milliseconds required to stop a machine-led intrusion, these autonomous agents are becoming essential for maintaining network integrity, responding in real time to anomalies that traditional monitoring tools would otherwise miss. This represents a move toward proactive deception, where the goal is not only to block the attacker but to actively confuse and delay it. By automating the response layer, organizations buy back the time human analysts need for deeper forensic investigation and strategic remediation.
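Honeytokens are the simplest form of this kind of deception: a decoy credential has no legitimate use, so any attempt to present it is a high-confidence alert. The sketch below covers only that token half of the idea, not adaptive honeypots. The key format, the event fields (`credential`, `source_ip`), and the class name are hypothetical.

```python
import secrets
from dataclasses import dataclass, field


@dataclass
class HoneytokenRegistry:
    """Tracks decoy credentials that no legitimate user or service should ever present."""

    # Maps each decoy token to a description of where it was planted.
    tokens: dict[str, str] = field(default_factory=dict)

    def plant(self, location: str) -> str:
        """Create a random decoy key and remember where it was placed."""
        token = "AKDECOY" + secrets.token_hex(8).upper()  # hypothetical key format
        self.tokens[token] = location
        return token

    def check(self, auth_events: list[dict]) -> list[dict]:
        """Return one alert per authentication attempt that presented a planted decoy."""
        alerts = []
        for event in auth_events:
            planted_at = self.tokens.get(event.get("credential", ""))
            if planted_at is not None:
                alerts.append({"source_ip": event.get("source_ip"), "planted_at": planted_at})
        return alerts
```

Recording where each token was planted means an alert also reveals which file share, config file, or mailbox the intruder has already reached.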

Addressing Internal Risks and Agentic Autonomy

Agentic Risk: Managing Internal AI Adoption

As organizations rapidly integrate autonomous AI agents into their internal workflows, the traditional boundaries of trust and identity within the enterprise have become increasingly blurred. These “agentic” systems, capable of performing complex tasks and interacting with sensitive APIs, present a new frontier for exploitation through prompt injection or data leakage. Without proper visibility into how these internal tools are used, companies risk creating inadvertent backdoors that attackers can manipulate. Security leaders are now tasked with implementing strict monitoring protocols and cryptographic identity attestation for every AI agent operating within their environment. This ensures that every automated action is verified and tied to a specific, authorized policy, preventing unauthorized agents from escalating privileges or accessing restricted datasets. The challenge lies in balancing the productivity gains of AI automation with the need for rigorous oversight, requiring a shift in identity and access management that accounts for non-human actors as primary participants in the corporate ecosystem.
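One way to tie every agent action to an identity and a policy is to require each request to carry a signature that the enforcement point verifies before checking an allowlist of actions for that agent. This sketch uses HMAC-SHA256 from the Python standard library as a simplified stand-in for cryptographic attestation; production systems would more likely rely on asymmetric keys, mutual TLS, or workload identities issued by an identity provider. The agent name, secret, and policy entries are hypothetical, and the sketch omits replay protection such as nonces or expiry timestamps.

```python
import hashlib
import hmac
import json

# Hypothetical per-agent secrets and action allowlists.
AGENT_KEYS = {"invoice-bot": b"demo-secret-rotate-me"}
AGENT_POLICIES = {"invoice-bot": {"read:invoices", "create:draft_payment"}}


def _sign(key: bytes, body: str) -> str:
    return hmac.new(key, body.encode("utf-8"), hashlib.sha256).hexdigest()


def sign_action(agent_id: str, action: str, payload: dict, key: bytes) -> dict:
    """Agent side: serialize the action canonically and attach an HMAC-SHA256 signature."""
    body = json.dumps({"agent": agent_id, "action": action, "payload": payload}, sort_keys=True)
    return {"body": body, "sig": _sign(key, body)}


def authorize(request: dict) -> bool:
    """Enforcement side: verify the signature, then check the action against the agent's policy."""
    try:
        body, sig = request["body"], request["sig"]
        claims = json.loads(body)
        agent = claims["agent"]
        key = AGENT_KEYS[agent]  # unknown agents are rejected here
        valid = hmac.compare_digest(_sign(key, body), sig)
    except (AttributeError, KeyError, TypeError, ValueError):
        return False  # malformed request or unknown agent
    if not valid:
        return False  # forged or tampered request
    # Note: no nonce or expiry check, so a captured request could be replayed.
    action = claims.get("action")
    return isinstance(action, str) and action in AGENT_POLICIES.get(agent, set())
```

With this check, a correctly signed `read:invoices` request is accepted, an `approve:payment` request from the same agent is rejected by policy even though its signature is valid, and any edit to the signed body invalidates the signature.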

Resilience: Building Multi-Layered Infrastructure

To maintain a secure posture in a landscape dominated by automated threats, organizations are adopting multi-layered strategies that prioritize telemetry and strict governance over all AI interactions. Technical controls alone are insufficient, so these strategies include comprehensive policies on what data may be fed into large language models and how third-party AI tools are vetted. Deep visibility across the entire network lets security teams identify subtle machine-speed anomalies that signal an AI-driven intrusion before it can escalate. The emerging consensus among industry leaders is that a resilient infrastructure requires a fundamental integration of human intelligence and automated defense. This approach allows businesses to constrain agent autonomy while ensuring that every digital action is backed by a verifiable security policy. Ultimately, the organizations that succeed will be those that treat cybersecurity not as a static problem to be solved but as a dynamic process of continuous adaptation and rigorous verification, remaining as agile as the adversaries they face.
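The machine-speed anomalies mentioned above can be approximated from ordinary telemetry by looking at the rhythm of actions within a session. This sketch flags sessions whose median gap between consecutive events falls below a threshold that a human is unlikely to sustain. The 50 ms threshold, the 20-event minimum, and the `(session_id, timestamp_ms)` event shape are assumptions to tune against real data. Legitimate automation such as backup jobs or service accounts will also trip it, so it works as one signal to correlate with others rather than a verdict.

```python
from collections.abc import Iterable
from statistics import median


def machine_speed_sessions(
    events: Iterable[tuple[str, float]],
    threshold_ms: float = 50.0,
    min_events: int = 20,
) -> list[str]:
    """Flag sessions whose median gap between consecutive actions is below threshold_ms.

    events: (session_id, timestamp_ms) pairs in any order.
    Sessions with fewer than min_events events are skipped to avoid noisy medians.
    """
    by_session: dict[str, list[float]] = {}
    for session_id, ts in events:
        by_session.setdefault(session_id, []).append(ts)

    flagged = []
    for session_id, stamps in by_session.items():
        if len(stamps) < min_events:
            continue
        stamps.sort()
        gaps = [later - earlier for earlier, later in zip(stamps, stamps[1:])]
        if median(gaps) < threshold_ms:
            flagged.append(session_id)
    return sorted(flagged)
```

Using the median rather than the mean keeps a single long pause, or a single burst, from dominating the result for a session.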