A quiet revolution is reshaping the global industrial landscape as manufacturing facilities move from reactive maintenance models to data-driven intelligence systems powered by deep learning. This shift is more than a technological upgrade; it is a fundamental rethinking of how production environments manage reliability and operational efficiency. In modern production, the traditional approach of fixing a machine only after it has failed is no longer viable for companies that want to remain competitive. Integrating artificial intelligence into the factory floor instead allows equipment failures to be anticipated well before they occur. By analyzing large streams of data from interconnected sensors, deep learning models can identify subtle signs of wear that were previously invisible to human operators and basic monitoring software. This proactive stance is essential in industries such as semiconductor fabrication and automotive assembly, where even a few minutes of unplanned downtime can translate into large revenue losses and disrupt tightly synchronized global supply chains.

The emergence of Industry 4.0, or smart manufacturing, has turned the factory floor into a complex digital ecosystem in which every motor, pump, and robotic arm serves as a data source. Whereas earlier waves of industrial automation focused on basic connectivity, the current era uses deep learning to move beyond data collection toward actionable intelligence. Traditional scheduled maintenance often incurs unnecessary expense by replacing parts that are still functional or, worse, fails to catch a breakdown before it occurs. Deep learning bridges this gap by providing a “horizon-based” view of asset health: a forecast of how each asset's condition is likely to evolve over a coming time window, which lets maintenance teams plan against the equipment's expected future state. As global markets grow more volatile, the ability to keep a production line resilient and predictable has moved from a technical support function to a core strategic capability. By stabilizing quality and maximizing uptime, manufacturers are using these algorithms to build a foundation for long-term growth and consistent operations across the enterprise.

The Evolution from Rule-Based Logic to Deep Learning

For decades, predictive maintenance was rooted in rule-based logic, in which sensors were programmed to trigger an alarm only when a predefined threshold was crossed. These systems relied on simple parameters, such as a bearing reaching a specific temperature or a motor exceeding a set vibration amplitude, to alert a technician. While these methods provided a foundational safety net, they were inherently limited: they could not account for the complex, non-linear behavior of modern machinery operating under fluctuating loads and varying environmental conditions. A machine can stay within “normal” bounds on every individual signal and still be approaching a catastrophic failure because of a combination of subtle factors that a rule-based system cannot correlate. Deep learning departs from these manually encoded expert assumptions by letting the system learn the relationships among multiple data streams directly from data, without humans having to define the rules.
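A minimal sketch of the rule-based approach makes its blind spot concrete. The signal names, limits, and readings below are hypothetical: each signal is compared against its own fixed threshold, so a reading in which every value sits just under its limit raises no alarm, even though all three signals are elevated at once.

```python
# Hypothetical per-signal alarm limits, as a rule-based system would encode them.
THRESHOLDS = {
    "bearing_temp_c": 85.0,    # bearing temperature, degrees Celsius
    "vibration_mm_s": 7.1,     # RMS vibration velocity, mm/s
    "motor_current_a": 40.0,   # motor current, amperes
}


def rule_based_alarms(reading: dict[str, float]) -> list[str]:
    """Return the names of signals whose value exceeds its fixed threshold."""
    return [name for name, limit in THRESHOLDS.items() if reading[name] > limit]


# Every value is individually "normal", so no rule fires, even though all
# three signals are elevated at the same time.
reading = {"bearing_temp_c": 83.0, "vibration_mm_s": 6.9, "motor_current_a": 39.5}
print(rule_based_alarms(reading))  # []
```

Each rule looks at one signal in isolation, so no rule can express "this combination is unusual"; that joint behavior is what a learned model is meant to capture.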

This shift lets maintenance teams move away from binary “pass or fail” diagnostics toward probabilistic estimates of an asset’s remaining useful life (RUL). Deep learning models extract information directly from large volumes of historical and real-time data, identifying subtle degradation patterns that can appear weeks or, in some cases, months before a traditional alarm would trip. By analyzing the relationships between disparate signals, such as concurrent changes in electrical current, acoustic emissions, and lubricant pressure, these models give a more complete picture of equipment health than any single threshold can. Decision-makers can therefore prioritize maintenance interventions by actual operational risk rather than by arbitrary calendar dates. Resources go where they are most needed, maintenance aligns with broader production goals, and less effort is wasted servicing equipment that is still functioning well.
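Risk-based prioritization can be sketched by ranking assets on expected failure cost: the model's estimated probability of failure within the planning horizon multiplied by the cost of the resulting unplanned downtime. The asset names, probabilities, and costs below are hypothetical, and the probabilities are assumed to come from an upstream predictive model.

```python
from dataclasses import dataclass


@dataclass
class AssetRisk:
    name: str
    failure_prob: float   # model-estimated P(failure within planning horizon), 0..1
    downtime_cost: float  # estimated cost if the asset fails unplanned


def prioritize(assets: list[AssetRisk]) -> list[tuple[str, float]]:
    """Rank assets by expected failure cost (probability x cost), highest first."""
    scored = [(a.name, a.failure_prob * a.downtime_cost) for a in assets]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hypothetical fleet with model-estimated failure probabilities.
fleet = [
    AssetRisk("pump_A", failure_prob=0.05, downtime_cost=200_000.0),
    AssetRisk("press_B", failure_prob=0.30, downtime_cost=20_000.0),
    AssetRisk("robot_C", failure_prob=0.10, downtime_cost=150_000.0),
]
for name, risk in prioritize(fleet):
    print(f"{name}: expected cost {risk:,.0f}")
```

A calendar schedule would treat all three assets alike; here the press has the highest failure probability but ranks last because its downtime is cheap, while the robot ranks first.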

Core Architectures Driving Industrial Intelligence

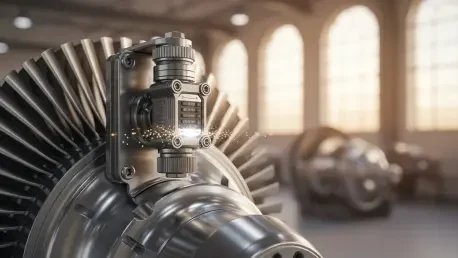

Practical applications of deep learning in a smart factory depend on neural network architectures suited to industrial data. Convolutional Neural Networks (CNNs), originally developed for image recognition and computer vision, have found a new role in analyzing vibration spectrograms and thermal images. Here the CNN treats the signal as a visual pattern: a spectrogram maps a vibration signal onto a two-dimensional grid of time versus frequency, so mechanical imbalances or surface degradation appear as shapes the network can learn to recognize even when they are too faint for a human to spot. For instance, a CNN can identify a specific frequency signature in vibration data that indicates a hairline crack in a turbine blade long before the crack becomes a structural threat. This approach to signal processing supports high precision in quality control and equipment monitoring, helping keep every component of a production line operating as intended.
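To show how a vibration signal becomes an "image", the sketch below computes a magnitude spectrogram by applying a naive discrete Fourier transform to overlapping, Hann-windowed frames. Rows are time frames and columns are frequency bins, which is the kind of 2D array a CNN would take as input. The signal, the 1 kHz sampling rate, and the frame sizes are hypothetical. A real pipeline would use an FFT routine from a library such as NumPy or SciPy rather than this O(n²) loop.

```python
import cmath
import math


def spectrogram(signal: list[float], frame_len: int, hop: int) -> list[list[float]]:
    """Magnitude spectrogram: one row per time frame, one column per frequency bin.

    Uses a Hann window and a naive DFT; only bins 0 .. frame_len // 2 are kept
    because the input is real-valued and the spectrum is symmetric.
    """
    window = [0.5 - 0.5 * math.cos(2 * math.pi * n / (frame_len - 1)) for n in range(frame_len)]
    rows = []
    for start in range(0, len(signal) - frame_len + 1, hop):
        frame = [signal[start + n] * window[n] for n in range(frame_len)]
        row = []
        for k in range(frame_len // 2 + 1):
            acc = sum(frame[n] * cmath.exp(-2j * math.pi * k * n / frame_len) for n in range(frame_len))
            row.append(abs(acc))
        rows.append(row)
    return rows


# Hypothetical vibration trace sampled at 1 kHz: a 50 Hz running-speed tone
# plus a weaker 200 Hz component standing in for a fault signature.
fs = 1000
signal = [
    math.sin(2 * math.pi * 50 * t / fs) + 0.3 * math.sin(2 * math.pi * 200 * t / fs)
    for t in range(1000)
]
spec = spectrogram(signal, frame_len=100, hop=50)
print(len(spec), "frames x", len(spec[0]), "bins")  # 19 frames x 51 bins
```

With 100-sample frames at 1 kHz, each bin spans 10 Hz, so the running-speed tone lands in bin 5 and the fault component in bin 20. A fault that develops over time would show up as a new horizontal band in this grid.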

Beyond visual signal analysis, Recurrent Neural Networks (RNNs), and in particular their Long Short-Term Memory (LSTM) variant, have become indispensable for handling the continuous time-series streams generated by industrial assets. Unlike feedforward networks, RNNs carry a hidden state from one time step to the next, and LSTMs add gating mechanisms that let them retain information over long sequences, where plain RNNs suffer from vanishing gradients. This makes LSTMs effective at tracking the gradual accumulation of fatigue in critical components such as pumps, actuators, and robotic joints. Autoencoders, meanwhile, are increasingly used for anomaly detection: trained only on data from normal operation, they learn to compress and reconstruct typical machine behavior, which establishes a baseline. When a machine begins to deviate from this learned baseline, even in ways that do not yet violate any safety limit, its readings reconstruct poorly and the autoencoder flags the behavior as a potential fault. This capability is particularly valuable in newer facilities where historical data on specific failure modes is scarce, because the system does not need to know what a “breakdown” looks like to recognize that something is wrong.
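The autoencoder idea can be sketched at toy scale. Below, a linear autoencoder with a one-dimensional bottleneck is trained by gradient descent on two correlated sensor channels from normal operation. It learns to reconstruct readings that follow the normal correlation. A reading whose individual values are within the training range but break that correlation therefore reconstructs poorly. The data, learning rate, and the "3x worst normal error" alert rule are all hypothetical. Production models are nonlinear, deeper, and trained on many channels.

```python
import math


def train_linear_autoencoder(data, lr=0.1, epochs=2000):
    """Fit a 2 -> 1 -> 2 linear autoencoder by full-batch gradient descent.

    Encoder weights w map a reading to a 1-D code z; decoder weights v map z
    back to a 2-D reconstruction. The loss is mean squared reconstruction error.
    """
    w = [0.3, 0.2]  # encoder weights (arbitrary small non-zero start)
    v = [0.3, 0.2]  # decoder weights
    n = len(data)
    for _ in range(epochs):
        gw = [0.0, 0.0]
        gv = [0.0, 0.0]
        for x1, x2 in data:
            z = w[0] * x1 + w[1] * x2              # bottleneck code
            r1, r2 = v[0] * z - x1, v[1] * z - x2  # reconstruction residuals
            gv[0] += 2 * r1 * z                    # dLoss/dv
            gv[1] += 2 * r2 * z
            dz = 2 * (r1 * v[0] + r2 * v[1])       # dLoss/dz
            gw[0] += dz * x1                       # dLoss/dw via the chain rule
            gw[1] += dz * x2
        w = [w[i] - lr * gw[i] / n for i in range(2)]
        v = [v[i] - lr * gv[i] / n for i in range(2)]
    return w, v


def reconstruction_error(w, v, x):
    """Squared error between a reading and its reconstruction."""
    z = w[0] * x[0] + w[1] * x[1]
    return (v[0] * z - x[0]) ** 2 + (v[1] * z - x[1]) ** 2


# Hypothetical normalized readings from two channels that move together in
# normal operation (e.g. load and motor current), with a little deterministic noise.
normal = [(i / 50 - 1, i / 50 - 1 + 0.02 * math.sin(3 * i)) for i in range(101)]
w, v = train_linear_autoencoder(normal)

# Hypothetical alert rule: 3x the worst reconstruction error seen on normal data.
threshold = 3 * max(reconstruction_error(w, v, x) for x in normal)

for reading in [(0.5, 0.51), (0.5, -0.5)]:
    err = reconstruction_error(w, v, reading)
    print(reading, f"error={err:.4f}", "ANOMALY" if err > threshold else "ok")
```

Both values in `(0.5, -0.5)` lie inside the range seen during training, so a per-channel limit check would pass it. The autoencoder flags it because the two channels no longer move together.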

The Role of Sensing and Edge Computing

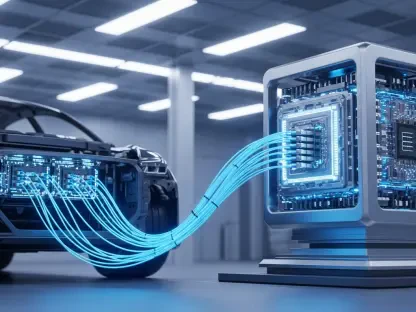

The effectiveness of any deep learning model is ultimately limited by the fidelity of the data it receives, which has put high-precision sensing at the center of this transformation. Modern factories are equipped with Micro-Electro-Mechanical Systems (MEMS) vibration sensors, pressure transducers, and current-monitoring integrated circuits that together form the “data backbone” of the facility. These sensors capture high-frequency signals that provide the raw material for AI diagnostics, but the sheer volume of that data is a significant logistical challenge. In high-speed production environments, streaming all raw sensor data to a centralized cloud server for processing is often impractical because of bandwidth constraints and unacceptable latency. This has driven the rapid adoption of “Edge AI,” in which data is processed locally on industrial gateways and AI-capable processors on the factory floor.
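A back-of-the-envelope calculation shows why streaming everything upstream is hard. The figures are hypothetical: a plant with 500 vibration channels, each sampled at 25.6 kHz with 24-bit (3-byte) samples.

```python
def raw_data_rate(channels: int, sample_rate_hz: float, bytes_per_sample: int) -> float:
    """Raw sensor throughput in bytes per second."""
    return channels * sample_rate_hz * bytes_per_sample


# Hypothetical plant: 500 vibration channels, 25.6 kHz sampling, 24-bit samples.
rate = raw_data_rate(channels=500, sample_rate_hz=25_600, bytes_per_sample=3)
print(f"{rate * 8 / 1e6:.1f} Mbit/s")            # 307.2 Mbit/s of sustained uplink
print(f"{rate * 86_400 / 1e12:.2f} TB per day")  # 3.32 TB per day if stored raw
```

Under these assumptions, the plant needs roughly 300 Mbit/s of continuous uplink and about 3 TB of storage per day before any processing. That volume is what motivates analyzing the signals where they are produced.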

Moving intelligence closer to the data source lets manufacturers achieve near-instantaneous diagnostics, which are critical for high-stakes operations. In a robotic assembly line or a high-pressure power generation facility, for example, a delay of even a few hundred milliseconds in detecting a fault could lead to severe equipment damage or safety hazards. Edge computing ensures that critical alerts are generated and acted upon in real time, regardless of the factory’s external internet connectivity. This localized approach also significantly reduces data transmission and long-term storage costs, because only the most relevant insights and anomalies are sent to the cloud for further analysis. The result is a scalable, resilient infrastructure in which individual machines monitor themselves, making the production environment more robust and less dependent on centralized control.
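Edge-side filtering can be sketched as a loop that scores each window of raw samples locally and forwards only a compact summary of the windows that look anomalous. Here the RMS amplitude and the 0.5 threshold are hypothetical stand-ins for a deployed model's anomaly score. Collecting results into a list stands in for the actual uplink to the cloud.

```python
import math
from typing import Iterable, Iterator


def rms(window: list[float]) -> float:
    """Root-mean-square amplitude of a window of samples."""
    return math.sqrt(sum(x * x for x in window) / len(window))


def edge_filter(windows: Iterable[list[float]], threshold: float) -> Iterator[dict]:
    """Score each window locally; yield a small summary only for anomalous ones.

    Raw samples never leave the gateway; only the window index and score do.
    """
    for idx, window in enumerate(windows):
        score = rms(window)  # stand-in for a local model's anomaly score
        if score > threshold:
            yield {"window": idx, "score": round(score, 3)}


# Hypothetical stream: three quiet windows and one high-amplitude burst.
quiet = [0.1 * math.sin(0.3 * n) for n in range(256)]
burst = [1.5 * math.sin(0.3 * n) for n in range(256)]
stream = [quiet, quiet, burst, quiet]

uplink = list(edge_filter(stream, threshold=0.5))
print(uplink)
```

Of the 1,024 raw samples in this stream, only a single two-field summary for window 2 is sent upstream. The quiet windows are scored and discarded on the gateway.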

Sector Adoption and the Path to Scalability

Deep learning for predictive maintenance has matured enough to be deployed across a range of demanding industrial sectors. In aerospace, major engine manufacturers use advanced analytics to monitor the health of propulsion systems across global fleets, so that maintenance is performed when it is actually needed and aircraft availability is maximized. In the energy and utilities sector, deep learning models monitor the insulation health of large transformers, allowing operators to detect signs of aging or mechanical stress before they lead to grid-destabilizing outages. The semiconductor industry has likewise adopted AI-based diagnostics to monitor the temperature and vibration stability that chip fabrication demands, since even a tiny deviation can ruin an entire lot of silicon wafers. These applications show that predictive maintenance is no longer an experimental concept but an essential pillar of modern asset management.

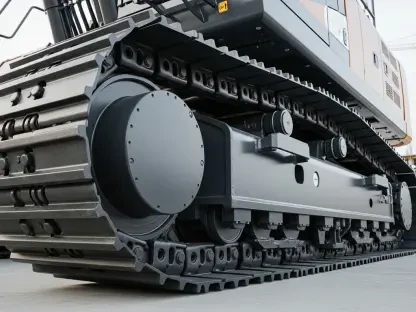

Despite these successes, scaling across an enterprise still involves significant engineering and cultural hurdles. One primary challenge is integrating legacy machinery, since many plants run a mix of decades-old equipment and cutting-edge robotics. Retrofitting older assets with modern sensors and bringing their data into a unified platform requires careful systems engineering. Establishing trust among experienced maintenance personnel is equally critical to the success of these programs. This need has driven the rise of “Explainable AI” (XAI), which aims to expose the rationale behind a model’s recommendation instead of delivering a “black box” alert. By showing which data points drove a failure prediction, XAI lets engineers validate the findings and make better-informed decisions. As these challenges are addressed, the focus is shifting toward a unified ecosystem in which artificial intelligence and human expertise work together to minimize unplanned downtime.
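One simple way to show "which data points led to an alert" assumes a reconstruction-based detector. The total reconstruction error is broken down by sensor channel, and channels are ranked by their share of it. The channel names and values below are hypothetical. This is a basic per-channel attribution, not a full XAI method such as SHAP or integrated gradients.

```python
def explain_reconstruction(
    actual: dict[str, float], reconstructed: dict[str, float]
) -> list[tuple[str, float]]:
    """Rank channels by their share of the total squared reconstruction error."""
    errors = {name: (actual[name] - reconstructed[name]) ** 2 for name in actual}
    total = sum(errors.values()) or 1.0  # avoid division by zero on a perfect match
    shares = [(name, err / total) for name, err in errors.items()]
    return sorted(shares, key=lambda item: item[1], reverse=True)


# Hypothetical normalized readings and a model's reconstruction of them.
actual = {"vibration": 0.82, "current": 0.40, "oil_pressure": 0.35, "temperature": 0.55}
reconstructed = {"vibration": 0.45, "current": 0.38, "oil_pressure": 0.36, "temperature": 0.50}

for name, share in explain_reconstruction(actual, reconstructed):
    print(f"{name:<12} {share:6.1%}")
```

In this example, vibration accounts for nearly all of the error. An engineer reviewing the alert therefore knows to inspect the vibration trace first rather than trusting an unexplained score.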

Strategic Next Steps: Integrating Intelligence and Human Expertise

Successful integration of deep learning into the manufacturing environment depends on a sustained focus on data quality, sensor accuracy, and algorithmic transparency. Manufacturers have found that the most effective implementations do not seek to replace human workers but to augment their specialized knowledge with predictive insights. This transition requires a significant cultural shift, away from reactive “firefighting” toward a disciplined, data-driven strategy. Looking ahead, the most successful organizations will be those that keep investing in workforce training, so that technicians and engineers can interpret and act on the outputs of deep learning models. By building hybrid teams in which AI handles the scale of continuous monitoring and humans handle complex problem-solving, factories can reach a level of reliability and coordination that was previously out of reach.

To ensure long-term success, industrial leaders must now focus on the formalization of data governance and the expansion of “Federated Learning” models. This approach allows different facilities within a company, or even different organizations within a supply chain, to collaboratively improve their AI models without compromising sensitive proprietary data. Additionally, the move toward Digital Twins—virtual replicas of physical assets fed by live data—provides a powerful sandbox for simulating various maintenance scenarios and training models without risking actual production. The final step in this evolution involves the seamless orchestration of maintenance tasks, where a failure prediction automatically triggers spare-parts procurement and schedules the necessary technician based on real-time availability. By taking these actionable steps, manufacturers can solidify the reliability of their operations, reduce environmental impact through optimized energy usage, and maintain a decisive competitive edge in an increasingly automated global market.
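The core aggregation step of federated learning, in the style of federated averaging (FedAvg), can be sketched briefly. Each facility trains on its own data and shares only its model parameters. A coordinator then averages those parameters, weighting each facility by its number of local training samples. The plants, sample counts, and parameter vectors below are hypothetical. Real deployments run many rounds and may add protections such as secure aggregation.

```python
def federated_average(updates: list[tuple[list[float], int]]) -> list[float]:
    """Weighted average of parameter vectors, weighted by each site's sample count.

    `updates` holds (parameters, num_local_samples) pairs; raw data never appears.
    """
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(params[i] * n for params, n in updates) / total for i in range(dim)]


# Hypothetical parameter vectors from three plants after one round of local training.
plant_updates = [
    ([0.20, -1.00, 0.50], 1000),  # plant 1
    ([0.40, -0.80, 0.30], 3000),  # plant 2
    ([0.10, -1.20, 0.70], 1000),  # plant 3
]
global_params = federated_average(plant_updates)
print([round(p, 3) for p in global_params])  # [0.3, -0.92, 0.42]
```

Plant 2 contributed three times as much data, so the global parameters sit closest to its update. The coordinator only ever sees parameter vectors, never the plants' sensor records.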