The difference between a market-leading manufacturer and a struggling one often hinges on how it manages the thousands of invisible data points that define a single product. Product Lifecycle Management (PLM) has outgrown its origins as a digital filing cabinet for CAD drawings and has become the central data backbone of modern manufacturing. This review evaluates how PLM technology has evolved, how it performs today, and how well it can act as a “single source of truth” in an increasingly fragmented global supply chain. The primary objective is to determine how these systems reduce systemic friction and whether the current shift toward digital continuity is enough to meet the aggressive time-to-market demands of the coming years.

The Foundation of Product Lifecycle Management

Modern Product Lifecycle Management functions as a strategic configuration of processes that governs a product’s journey from its initial concept through design, manufacturing, service, and eventually disposal. At its core, the technology seeks to unify people, data, and business systems into a cohesive whole. By establishing a centralized repository, it ensures that every stakeholder, regardless of location, works from the same validated information. Industry practitioners call this centralization the “single source of truth”; it prevents the costly errors that arise when teams build from outdated or conflicting design revisions.
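The “single source of truth” idea can be illustrated with a minimal sketch, assuming a simple model in which each part has an ordered list of revisions and each revision is either “draft” or “released”. The class and method names (`PartRepository`, `current_released`) are hypothetical and do not come from any PLM product. The point is only that consumers ask one store for the latest *released* revision, so unreleased drafts never reach the shop floor.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Revision:
    label: str   # revision letter, e.g. "A", "B"
    status: str  # hypothetical lifecycle state: "draft" or "released"


@dataclass
class PartRepository:
    """Hypothetical central store holding every revision of every part."""

    parts: dict[str, list[Revision]] = field(default_factory=dict)

    def add_revision(self, part_id: str, rev: Revision) -> None:
        # Revisions are assumed to be added in chronological order.
        self.parts.setdefault(part_id, []).append(rev)

    def release(self, part_id: str, label: str) -> None:
        # Promote an existing draft revision to the released state.
        for rev in self.parts[part_id]:
            if rev.label == label:
                rev.status = "released"
                return
        raise KeyError(f"{part_id} has no revision {label}")

    def current_released(self, part_id: str) -> Revision | None:
        # Consumers query the repository instead of keeping local copies,
        # so every site resolves to the same validated revision.
        released = [r for r in self.parts.get(part_id, []) if r.status == "released"]
        return released[-1] if released else None
```

If revision B of a bracket is still a draft, `current_released` keeps returning revision A until B is explicitly released. A real system would add approval workflows and access control on top of this.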

This framework marks a significant departure from the siloed engineering practices of the past. PLM began as a reactive tool used primarily for version control; it has since become a proactive digital backbone for global collaboration. The shift matters because today’s products are no longer isolated mechanical entities but complex systems of hardware, software, and electronic components. Without a robust PLM framework, managing the cross-disciplinary dependencies of such products becomes extremely difficult, and data integrity across the supply chain breaks down.

Essential Components for Seamless Product Development

Requirement Management and Living Assets

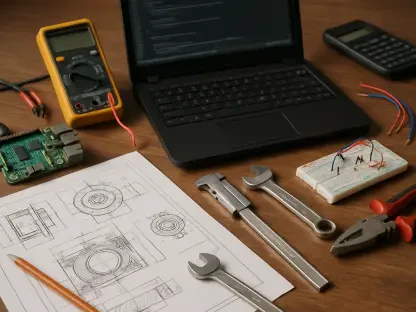

Capturing and preserving a product’s original intent is among the hardest parts of engineering. PLM addresses it by turning static requirements into dynamic, shareable assets. Traditionally, requirements were buried in disconnected documents, and ambiguities surfaced only during the expensive quality assurance phase. When requirements live inside the system as “living assets”, every department from purchasing to quality can see exactly what the product is supposed to achieve. This transparency is a major reason organizations can reduce scrap rates: it keeps the physical build aligned with the verified digital requirement.
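One way to picture a requirement as a “living asset” is a record that stays linked to the tests that verify it. This is a minimal sketch, assuming a hypothetical schema in which each requirement lists the IDs of its verification tests and test outcomes are stored as pass/fail flags. A simple query then exposes requirements that no passing test covers. This gap is the one that would otherwise surface late, during quality assurance.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Requirement:
    req_id: str
    text: str
    # IDs of the test records linked to this requirement (hypothetical schema).
    verified_by: list[str] = field(default_factory=list)


def unverified(requirements: list[Requirement], test_results: dict[str, bool]) -> list[str]:
    """Return IDs of requirements that have no linked test that passed.

    test_results maps a test ID to True (passed) or False (failed);
    a linked test with no recorded result counts as not passed.
    """
    return [
        req.req_id
        for req in requirements
        if not any(test_results.get(test_id, False) for test_id in req.verified_by)
    ]
```

Because the query runs against live data, every department sees the same coverage gaps at any point in the program, not only at the end.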

Component Traceability and Design Reuse

One of the most pervasive drains on engineering productivity is the “reinvention trap”, where designers recreate existing parts simply because they cannot find them in the company’s internal catalog. Modern PLM systems address this through robust search and deep visibility into legacy design files. When internal catalogs are easy to navigate, duplicate part numbers stop proliferating. This not only saves design time, which can fall by as much as 30%, but also strengthens corporate purchasing power. When a company can show that it uses the same component across multiple product lines, it gains the leverage to negotiate better volume discounts from suppliers.
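The “search before you create” step can be sketched in a few lines, assuming parts are described by free-text descriptions and that token overlap (Jaccard similarity) is an acceptable stand-in for the richer classification attributes a real PLM catalog would use. The function names and the 0.8 threshold are hypothetical choices for illustration.

```python
from __future__ import annotations


def normalize(description: str) -> frozenset[str]:
    # Lowercase and split on whitespace/commas so word order and case don't matter.
    return frozenset(description.lower().replace(",", " ").split())


def find_existing(catalog: dict[str, str], description: str,
                  min_overlap: float = 0.8) -> list[str]:
    """Return part numbers whose descriptions closely match the query.

    Similarity is the Jaccard index of the two token sets:
    |shared tokens| / |all tokens|.
    """
    query = normalize(description)
    matches = []
    for part_no, desc in catalog.items():
        tokens = normalize(desc)
        union = query | tokens
        if union and len(query & tokens) / len(union) >= min_overlap:
            matches.append(part_no)
    return matches
```

With a catalog containing “Hex bolt M8 x 40 zinc” as P-1001, a query for “zinc hex bolt, M8 x 40” returns `["P-1001"]`. The designer reuses that part instead of requesting a new number. An M10 variant scores 5/7 ≈ 0.71 and is not matched, so the threshold controls how strict the reuse check is.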

Integrated Cross-Functional Collaboration

True collaboration requires more than just frequent meetings; it requires a structured digital workflow that involves manufacturing and procurement early in the design phase. PLM technology facilitates this by allowing downstream teams to provide feedback while a design is still fluid. This early intervention ensures that manufacturability is “baked into” the product from the start, preventing the expensive redesign loops that occur when a design is deemed impossible to produce only after it reaches the shop floor. This shift from a sequential “over-the-wall” approach to a concurrent engineering model is what allows firms to hit aggressive launch targets with high confidence.

Emerging Trends in Digital Continuity

The technological frontier is moving toward “digital thread” connectivity, which aims to eliminate manual data transfers and paper-based processes entirely. In this model, data flows automatically and in real time between disparate systems, so a change made in engineering is immediately reflected in the manufacturing and service modules. This connectivity matters because it removes the manual re-entry step where human error traditionally creeps into data migration. AI-driven analytics are also being integrated to scan these digital threads and predict bottlenecks before they appear in the physical world, letting managers optimize change management cycles proactively.
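The propagation behavior described above is essentially publish/subscribe. This is a minimal sketch, assuming an in-process event bus as a stand-in for the real integrations (APIs, message brokers) that would connect engineering, manufacturing, and service systems. `DigitalThread` and `EngineeringChange` are hypothetical names. An engineering change is published once, and every subscribed module receives it without anyone re-keying data.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class EngineeringChange:
    part_id: str
    new_revision: str


class DigitalThread:
    """Hypothetical in-process event bus standing in for system integrations."""

    def __init__(self) -> None:
        self._subscribers: list[Callable[[EngineeringChange], None]] = []

    def subscribe(self, handler: Callable[[EngineeringChange], None]) -> None:
        # Each downstream module registers a handler once.
        self._subscribers.append(handler)

    def publish(self, change: EngineeringChange) -> None:
        # A single publish reaches every subscriber; no manual re-entry.
        for handler in self._subscribers:
            handler(change)
```

For example, a manufacturing bill of materials and a service manual index can each subscribe a handler that records the new revision. Publishing `EngineeringChange("BRKT-100", "C")` then updates both. A production system would also need delivery guarantees and error handling, which this sketch omits.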

Moreover, the decentralization of data access is becoming a standard feature rather than an optional luxury. While older systems were often criticized for being “engineering-centric,” newer implementations prioritize user experiences that cater to non-technical stakeholders. This democratization of data ensures that a procurement officer or a marketing lead can extract necessary product insights without needing a degree in mechanical engineering. By lowering the barrier to entry, these systems foster a more inclusive corporate culture where every department feels a shared responsibility for the product’s ultimate success in the market.

Real-World Applications and Industrial Impact

In high-complexity sectors like aerospace and medical device manufacturing, PLM is not just a productivity tool but a regulatory necessity. These industries require a level of precision and traceability that manual systems simply cannot provide. For instance, in the event of a component failure, a well-implemented PLM system allows an organization to trace that specific part back to its original design, the specific batch of raw materials used, and the quality checks it passed. This granularity is what allows global organizations to synchronize multi-site engineering teams and ensure that suppliers are always working from the most recent, validated revisions, thereby minimizing the risk of expensive product recalls.
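The traceability chain in the paragraph above (unit, design revision, raw-material batch, quality checks) can be modeled as linked build records. This is a minimal sketch, assuming a hypothetical `BuildRecord` schema keyed by serial number. It shows both directions: tracing one failed unit back to its origins, and the reverse query that scopes a recall to every unit built from a suspect batch.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class BuildRecord:
    serial_no: str
    part_id: str
    design_revision: str
    material_batch: str
    inspections: tuple[str, ...]  # quality checks the unit passed


def trace(serial_no: str, builds: dict[str, BuildRecord]) -> dict[str, object]:
    # Forward lookup: from a failed unit back to its design and materials.
    record = builds[serial_no]
    return {
        "part": record.part_id,
        "design_revision": record.design_revision,
        "material_batch": record.material_batch,
        "inspections": list(record.inspections),
    }


def affected_units(batch: str, builds: dict[str, BuildRecord]) -> list[str]:
    # Reverse lookup: every serial number built from a suspect batch,
    # which limits a recall to the units that are actually at risk.
    return sorted(b.serial_no for b in builds.values() if b.material_batch == batch)
```

The reverse query is the one that keeps recalls small. Without linked records, a company may have to recall every unit of a part, not just those built from the affected batch.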

Overcoming Systemic Obstacles and Implementation Challenges

Despite the clear advantages, significant hurdles remain, most notably the “executive blind spot.” Many leaders still view PLM as a localized engineering expense rather than a comprehensive business strategy. This often leads to misaligned departmental Key Performance Indicators (KPIs): engineering might be rewarded for speed while manufacturing is measured on unit cost, creating friction that the software alone cannot resolve. Migrating decades of legacy data into a modern environment also remains a daunting technical task for many established firms. Current development focuses on specialized migration tools and standardized protocols that make this transition less painful and more automated.

The Future of Integrated Lifecycle Systems

Looking forward, the technology is moving toward a more autonomous state where systems will leverage machine learning to suggest design improvements based on historical manufacturing data. Imagine a PLM system that alerts an engineer that a specific material choice has historically led to a 15% failure rate in the assembly line. This shift from a descriptive tool to a prescriptive partner will likely redefine the role of the engineer. Long-term, the widespread adoption of these comprehensive frameworks will transform product development from a series of reactive, “firefighting” incidents into a predictable, friction-free flow that defines a company’s competitive advantage in a crowded marketplace.
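The material alert imagined above can be approximated today with plain historical statistics. This is a minimal sketch, not the machine-learning model the paragraph envisions: it counts past assembly outcomes per material and flags materials whose failure rate reaches a threshold. The 10% threshold and the 20-build minimum sample size are hypothetical defaults.

```python
from __future__ import annotations

from collections import defaultdict


def failure_rates(history: list[tuple[str, bool]]) -> dict[str, float]:
    """Share of past assemblies that failed, per material.

    history holds (material, failed_in_assembly) pairs from earlier builds.
    """
    totals: dict[str, int] = defaultdict(int)
    failures: dict[str, int] = defaultdict(int)
    for material, failed in history:
        totals[material] += 1
        if failed:
            failures[material] += 1
    return {m: failures[m] / totals[m] for m in totals}


def flag_materials(history: list[tuple[str, bool]], threshold: float = 0.10,
                   min_samples: int = 20) -> dict[str, float]:
    """Return {material: rate} for materials at or above the failure threshold.

    Materials with fewer than min_samples builds are skipped, because a rate
    computed from a handful of builds is too noisy to act on.
    """
    counts: dict[str, int] = defaultdict(int)
    for material, _ in history:
        counts[material] += 1
    rates = failure_rates(history)
    return {m: r for m, r in rates.items() if counts[m] >= min_samples and r >= threshold}
```

A material with 3 failures in 20 builds has a rate of 0.15 and is flagged, in line with the 15% example above. A material with 5 failures in 5 builds is not flagged, because its sample is below the minimum. The minimum-sample guard is a deliberate design choice to avoid alerting engineers on anecdotal data.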

Summary and Final Assessment

This review of the current product development landscape shows that delays are rarely the result of individual incompetence; they are the direct consequence of process friction embedded in the organization. Organizations that have transitioned to a unified digital backbone significantly outperform their peers by eliminating redundant work and synchronizing cross-functional teams earlier in the development cycle. The evidence suggests that “digital continuity” is no longer a luxury but a fundamental requirement for any company managing complex, multi-disciplinary products. Successful implementations show that when data is treated as a transparent, living asset, the entire organization moves with greater agility and precision.

Moving forward, the focus must shift from merely implementing the software to fostering a culture of lifecycle-oriented thinking. Companies should prioritize the alignment of departmental incentives to ensure that the “single source of truth” is supported by collective accountability. Future development will likely center on making these systems even more predictive, using historical data to automate routine compliance and design checks. For organizations aiming to maintain a competitive edge, the path forward involves dismantling the remaining silos and fully embracing a digital thread that connects the initial concept to the end-user experience. This transition will ultimately turn operational efficiency into a sustainable engine for continuous innovation.