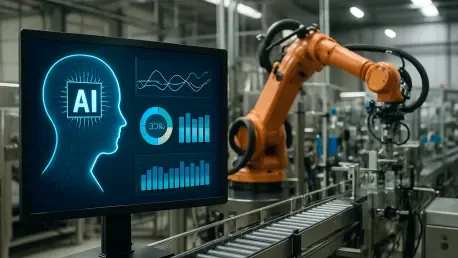

With over a decade of experience in manufacturing systems and electronic infrastructure, Kwame Zaire has established himself as a leading voice at the intersection of production management and emerging technologies. His work focuses on the delicate balance between operational efficiency, driven by predictive maintenance and automated logistics, and the growing necessity for robust industrial safety. As AI transitions from an experimental layer to the central operating system of global commerce, Kwame provides critical insights into the hidden vulnerabilities that threaten to disrupt the modern supply chain.

The following discussion explores the evolving landscape of industrial cybersecurity, specifically focusing on the risks of AI-powered hacking, the dangers of poisoned training data, and the urgent need to treat AI infrastructure with the same rigor as traditional operational technology.

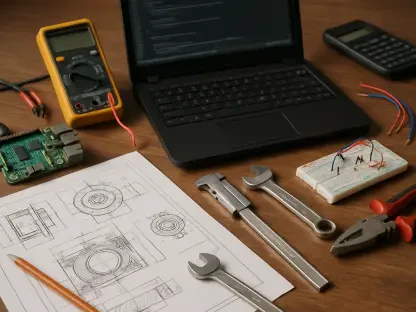

Malicious code can be hidden in AI models, allowing them to infiltrate a company’s data pipeline quietly. What specific vetting protocols should be implemented when sourcing models from open repositories, and how can organizations verify that a model’s internal logic remains uncompromised after deployment?

When sourcing from open repositories like Hugging Face, organizations must treat every model as “untrusted” by default. We have seen instances where malicious code was executed the moment a company loaded an environment, proving that sandboxing is no longer optional. Vetting protocols should include static and dynamic analysis of the model’s architecture, combined with a “clean room” testing phase where the model’s outputs are compared against a verified baseline using known datasets. To verify internal logic after deployment, companies need to implement continuous monitoring of decision-making patterns, looking for the “silent failures” that occur when a model begins to drift or act on hidden triggers. It is about creating a cryptographic chain of custody for the model, ensuring that the logic you approved on day one is exactly what is running on day one hundred.
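One simple way to picture the "chain of custody" idea is a digest check: record a SHA-256 hash of the model file when it is approved, then confirm the deployed file still matches before each load. The sketch below is a minimal illustration under that assumption, not a description of any specific vendor's process; the function names `sha256_of_file` and `verify_model` are hypothetical.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        # Read in fixed-size chunks so large model files do not need to fit in memory.
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_model(path: Path, approved_digest: str) -> bool:
    """True only if the file on disk still matches the digest recorded at approval."""
    # compare_digest performs a constant-time comparison of the two hex strings.
    return hmac.compare_digest(sha256_of_file(path), approved_digest.lower())
```

A digest check only shows that the file has not changed since approval. It says nothing about whether the approved file was safe in the first place, which is why the sandboxed vetting step still comes first.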

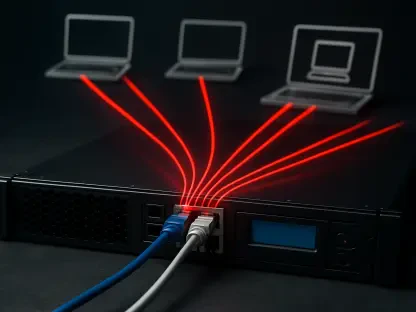

Attackers are increasingly using AI to automate nearly 90% of their hacking campaigns. Given this extreme speed, what specific defensive technologies are required to stay ahead, and could you walk us through a step-by-step response plan for a high-velocity, AI-driven breach?

To counter an attack where 80 to 90 percent of the process is automated, manual human intervention is simply too slow to prevent disaster. The defensive posture must shift toward autonomous response systems that can isolate compromised network segments within milliseconds of detecting anomalous behavior. A high-velocity response plan starts with immediate AI-driven isolation of the affected data pipeline to stop the spread, followed by a transition to “manual override” for critical industrial controls. Once the perimeter is stabilized, the team must conduct a forensic sweep of the AI decision logs to determine whether the breach involved data theft or logic manipulation. Finally, the system should be restored from a “gold standard” offline backup that has been verified as untainted by the AI’s autonomous learning cycles.
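The ordering of those four steps matters, and one way to keep an automated runbook honest is to refuse any step taken out of sequence. The following is a rough sketch of that idea only; the `Phase` and `IncidentRunbook` names are hypothetical, and a real playbook would attach actual isolation and restore actions to each phase.

```python
from enum import IntEnum
from typing import List, Optional


class Phase(IntEnum):
    """The four response steps, in the order described in the interview."""
    ISOLATE_PIPELINE = 1
    MANUAL_OVERRIDE = 2
    FORENSIC_SWEEP = 3
    RESTORE_FROM_BACKUP = 4


class IncidentRunbook:
    """Tracks progress through the phases and rejects out-of-order steps."""

    def __init__(self) -> None:
        self.completed: List[Phase] = []

    def next_phase(self) -> Optional[Phase]:
        """Return the first phase not yet completed, or None when all are done."""
        remaining = [p for p in Phase if p not in self.completed]
        return remaining[0] if remaining else None

    def complete(self, phase: Phase) -> None:
        """Mark a phase as done, but only if it is the next one in sequence."""
        expected = self.next_phase()
        if phase is not expected:
            expected_name = expected.name if expected else "nothing (runbook finished)"
            raise RuntimeError(f"expected {expected_name}, got {phase.name}")
        self.completed.append(phase)
```

In this sketch, attempting `RESTORE_FROM_BACKUP` before the forensic sweep raises an error, which mirrors the point that restoring before understanding the breach risks reintroducing it.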

Poisoned training data can lead to subtle manipulations in demand forecasting or routing algorithms without any physical breach. How can logistics teams differentiate between a standard market fluctuation and a deliberate manipulation of an AI’s decision-making process, and what data-integrity metrics are most critical?

Differentiating between a genuine market shift and a poisoned dataset requires looking for “statistical impossibility” in the input signals. Standard market fluctuations usually follow historical trends or external economic markers, whereas data poisoning often manifests as a hyper-specific anomaly designed to trigger a specific response, such as rerouting a shipment to a vulnerable location. The most critical metrics for logistics teams are data lineage and input variance scores, which flag when a source begins providing data that deviates from the consensus of other sensors or vendors. By maintaining a high “integrity score” for every data point, teams can spot when an attacker is trying to quietly nudge a procurement engine toward a false demand spike. We must stop trusting the output implicitly and start questioning the “diet” of data we are feeding these systems.
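An "input variance score" of the kind described could be computed in many ways. As one possible sketch, the code below scores each source by how far its reading sits from the median of all sources, measured in units of the median absolute deviation (MAD). The choice of MAD, the threshold of 5.0, and the vendor names are all illustrative assumptions rather than anything specified in the interview.

```python
from statistics import median
from typing import Dict, List


def deviation_scores(readings: Dict[str, float]) -> Dict[str, float]:
    """Score each source by its distance from the consensus median, in MAD units."""
    values = list(readings.values())
    consensus = median(values)
    mad = median(abs(v - consensus) for v in values)
    # If most sources agree exactly, MAD is 0; use a tiny scale so that
    # identical readings score 0 and any disagreement scores very high.
    scale = mad if mad > 0 else 1e-9
    return {src: abs(v - consensus) / scale for src, v in readings.items()}


def flag_outliers(readings: Dict[str, float], threshold: float = 5.0) -> List[str]:
    """Return the sources whose score exceeds the (assumed) threshold, sorted by name."""
    scores = deviation_scores(readings)
    return sorted(src for src, score in scores.items() if score > threshold)


# Hypothetical demand signals from five vendors for the same product.
readings = {
    "vendor_a": 100.0,
    "vendor_b": 102.0,
    "vendor_c": 98.0,
    "vendor_d": 101.0,
    "vendor_e": 180.0,
}
print(flag_outliers(readings))  # ['vendor_e']
```

A flagged source is not proof of poisoning. A genuine market shift would normally move several sources together, whereas a single source breaking from the consensus is the pattern worth investigating first.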

Many industrial systems are now “insecure by default” because leadership often places too much trust in probabilistic AI outputs. What are the practical steps for transitioning AI from an experimental tool to a hardened operational technology, and how should red-team testing be integrated into this process?

The transition begins by stripping away the “magic” of AI and treating it like any other piece of heavy machinery on the factory floor, subject to the same safety and reliability standards. Practical hardening involves moving models out of the cloud and onto edge devices where possible, reducing the external attack surface and ensuring the system can function during a network outage. Red-team testing should be integrated not just as a one-time audit, but as a continuous “adversarial” training process where security experts actively try to trick the model into making unsafe decisions. This creates a feedback loop where the AI learns to recognize and resist manipulation attempts, turning a probabilistic weakness into a deterministic strength. Leaders need to realize that an AI system that works 99% of the time is a 1% liability that could shut down an entire processing plant.

A single vulnerability in a shared AI platform can cause cascading failures across thousands of downstream partners. What strategies can companies use to map their third-party AI dependencies, and what specific “circuit breakers” should be built into supply chain software to prevent system-wide contagion?

Mapping dependencies requires a comprehensive “AI Bill of Materials” that lists every model, API, and third-party data source used within the organization’s ecosystem. Companies need to realize that they are part of a digital web; just as the 2021 ransomware attack on JBS meat processing facilities disrupted global distribution, a failure in a shared AI forecasting tool can paralyze an entire sector. “Circuit breakers” should be built as hard-coded logic gates that prevent the AI from making autonomous decisions above a certain financial or operational threshold without human sign-off. If the AI suddenly attempts to order ten times the usual amount of raw materials or changes a shipping route to a high-risk zone, the system must automatically lock down and alert the security operations center. This prevents a single compromised node from triggering a domino effect across the entire supply chain.
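A circuit breaker of this kind can be as simple as a deterministic check that sits between the model's recommendation and the ordering system. The sketch below is a minimal illustration; the `BreakerLimits` values (a 3x quantity ratio and a 250,000 order-value ceiling), the `route_risk` labels, and the function name are hypothetical and would need to be set by each organization.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class BreakerLimits:
    """Hard limits for autonomous decisions (illustrative values only)."""
    max_quantity_ratio: float = 3.0      # allowed multiple of the usual order size
    max_order_value: float = 250_000.0   # financial ceiling for unattended orders


def trip_reasons(
    quantity: float,
    unit_price: float,
    usual_quantity: float,
    route_risk: str,
    limits: BreakerLimits = BreakerLimits(),
) -> List[str]:
    """Return the reasons an order must be held; an empty list means it may proceed."""
    reasons = []
    if usual_quantity > 0 and quantity / usual_quantity > limits.max_quantity_ratio:
        reasons.append("quantity far above usual order size")
    if quantity * unit_price > limits.max_order_value:
        reasons.append("order value above autonomous limit")
    if route_risk == "high":
        reasons.append("route passes through a high-risk zone")
    return reasons


# An AI-proposed order at ten times the usual quantity is held for human sign-off.
reasons = trip_reasons(quantity=10_000, unit_price=4.0, usual_quantity=1_000, route_risk="low")
if reasons:
    print("HOLD for sign-off:", "; ".join(reasons))
```

The key design choice is that the gate is plain, auditable logic with no learned component, so a compromised model cannot talk its way past it.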

What is your forecast for the security of AI-driven industrial supply chains?

I believe we are entering a period of “forced maturity” where the industry will suffer several high-profile, AI-driven outages before security truly catches up to innovation. Right now, the answer to whether our systems are secure enough is a definitive “not yet,” but the move toward decentralized AI and rigorous data governance is promising. We will see a shift where companies no longer compete just on the intelligence of their algorithms, but on the resilience and auditability of their entire digital infrastructure. My forecast is that the most successful industrial players five years from now will be those who treated AI security as a core engineering discipline rather than a secondary IT concern. The scale of the opportunity is massive, but only for those who build their “smart” factories on a foundation of “hard” security.