The rapid proliferation of artificial intelligence has transitioned from a localized software trend into a physical infrastructure crisis that is currently testing the structural integrity of the global electrical grid. Unlike the steady, predictable growth of the internet in previous decades, the AI explosion requires a massive, immediate infusion of power that our current utility systems were never designed to handle. This shift represents more than just a demand for more electricity; it is a fundamental transformation of how energy is consumed, distributed, and prioritized across the modern landscape. High-performance computing (HPC) now dictates the pace of industrial development, forcing a departure from traditional infrastructure management toward a more integrated, high-density approach.

The Evolution of AI-Driven Power Demand

The emergence of generative AI and large language models has fundamentally altered the trajectory of data center energy requirements. In the past, data centers were primarily repositories for information, serving as digital warehouses where power consumption ebbed and flowed with user traffic. However, the current era of machine learning necessitates a shift toward “compute-heavy” environments where the core principle is no longer just storage, but the active processing of astronomical datasets. This evolution has moved the sector from a peripheral utility consumer to a primary driver of national energy policy.

As high-performance computing becomes the backbone of the digital economy, the context of its deployment has changed. We are no longer looking at isolated server farms in rural areas but at massive integrated clusters that require direct access to high-voltage transmission lines. This necessity has emerged because traditional grid management, which relies on “load shedding” and peak-shaving, cannot accommodate the relentless appetite of AI hardware. What sets this technology apart in the broader landscape is that it overrides the demand-side efficiencies of the past, creating a new standard for industrial energy density.

Technical Profiles of Modern Data Centers

High Load Factor and Continuous Energy Consumption

The most striking technical feature of an AI-optimized data center is its exceptionally high load factor, the ratio of average demand to peak demand. While traditional cloud storage facilities might see their power usage drop during off-peak hours, a machine learning cluster operates at near-maximum capacity twenty-four hours a day. This “always-on” nature creates a flat demand profile that offers no respite for the grid. For utility providers, this is a nightmare scenario: there are no valleys in the consumption curve in which to perform maintenance or balance the load, meaning the grid must be built to support peak capacity at all times.
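To make the contrast concrete, load factor is simply average demand divided by peak demand over a period. The sketch below compares two 24-hour profiles; the megawatt values are invented for illustration and are not measurements from any real facility.

```python
from statistics import mean


def load_factor(hourly_mw):
    """Return average load divided by peak load for one period."""
    peak = max(hourly_mw)
    if peak <= 0:
        raise ValueError("peak load must be positive")
    return mean(hourly_mw) / peak


# Hypothetical 24-hour profiles in megawatts (illustrative values only).
traditional_site = [40] * 8 + [100] * 8 + [60] * 8  # demand follows user traffic
ai_cluster = [95] * 12 + [100] * 12                 # near-constant training load

traditional_lf = load_factor(traditional_site)
ai_lf = load_factor(ai_cluster)

print(f"traditional: {traditional_lf:.3f}, AI cluster: {ai_lf:.3f}")
```

Under these assumed numbers both sites have the same 100 MW peak, but the AI cluster leaves almost no off-peak valley for the utility to work with.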

This constant draw of energy changes the operating conditions of the local distribution network. Continuous high-amperage flow generates persistent heat in transformers and underground cabling, accelerating the degradation of physical assets that were once expected to last for decades. By maintaining such a relentless consumption schedule, AI data centers effectively remove the “buffer” that most municipal grids rely on to handle seasonal spikes, such as summer heat waves. This makes the addition of AI infrastructure a zero-sum game for regional power availability.
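One way to see why a flat profile is harder on equipment is that resistive heating scales with the square of the current. The rough sketch below averages that squared term over a day for two hypothetical loading patterns. It deliberately ignores thermal time constants, ambient temperature, and the temperature dependence of resistance, so it indicates a trend rather than modeling asset aging.

```python
def mean_relative_heating(load_fractions):
    """Average I^2 * R heating relative to continuous full-load operation.

    Each sample is the fraction of rated current carried during one hour;
    resistive loss scales with the square of that fraction.
    """
    if not load_fractions:
        raise ValueError("need at least one sample")
    return sum(f ** 2 for f in load_fractions) / len(load_fractions)


# Hypothetical hourly loading as a fraction of rated current.
cyclic = [0.4] * 8 + [1.0] * 8 + [0.6] * 8  # overnight valley, daytime peak
flat = [0.95] * 12 + [1.0] * 12             # always-on AI load

cyclic_heating = mean_relative_heating(cyclic)
flat_heating = mean_relative_heating(flat)

print(f"cyclic: {cyclic_heating:.3f}, flat: {flat_heating:.3f}")
```

With these assumed profiles the flat load produces nearly twice the average resistive heating of the cyclic one, even though both reach the same peak current.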

High-Density Computing Clusters

Inside these facilities, the shift toward GPU-heavy server racks has led to localized power densities that were previously unthinkable. A single rack in a modern AI data center can now demand upwards of 100 kilowatts, which is nearly ten times the requirement of a standard enterprise server rack. This concentration of power requires specialized cooling, such as direct-to-chip liquid cooling, because air cooling alone is no longer sufficient to dissipate the thermal energy generated. These technical requirements force a complete redesign of the facility’s internal electrical architecture.

The extreme density of these clusters means that a relatively small physical footprint can put as much strain on a substation as a mid-sized city. This creates a “hot spot” on the grid, where the demand is so concentrated that it can cause voltage instability for neighboring customers. From a technical perspective, the challenge is not just the total volume of energy but the intensity of its delivery. Modern data centers are essentially industrial smelting plants disguised as office buildings, requiring a level of electrical fortification that traditional infrastructure simply does not possess.
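The per-rack figures translate into substation-scale demand through simple multiplication. The sketch below is a back-of-the-envelope estimate only: the rack count of 500 and the power usage effectiveness (PUE) of 1.2 are assumptions chosen for illustration, while the 100 kW and roughly 10 kW per-rack values follow the comparison in the text.

```python
def facility_demand_mw(racks, kw_per_rack, pue):
    """Estimate total facility draw in MW from rack count and per-rack power.

    pue (power usage effectiveness) is total facility power divided by
    IT power, so it can never be below 1.0.
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    it_load_kw = racks * kw_per_rack
    return it_load_kw * pue / 1000.0


# Hypothetical halls with the same footprint: 500 racks, assumed PUE of 1.2.
ai_hall = facility_demand_mw(racks=500, kw_per_rack=100, pue=1.2)
enterprise_hall = facility_demand_mw(racks=500, kw_per_rack=10, pue=1.2)

print(f"AI hall: {ai_hall:.1f} MW, enterprise hall: {enterprise_hall:.1f} MW")
```

The same floor space moves from single-digit megawatts to tens of megawatts under these assumptions, which is the kind of jump that turns a building into a grid hot spot.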

Current Trends in Grid Modernization and Utility Planning

Industry leaders are currently pivoting toward a “tech time” deployment strategy, which attempts to bridge the gap between rapid software innovation and slow-moving utility construction. This trend is most visible in regional hubs like the Pennsylvania corridor, where developers are targeting “brownfield” sites: former industrial zones that already have heavy-duty connections to the grid. By repurposing these sites, companies can bypass some of the years-long waiting periods for new transmission lines, though this often results in a patchwork of modernized and decaying infrastructure operating side by side.

Moreover, there is a significant industry shift toward real-time grid monitoring and dynamic load management. Utilities are beginning to implement advanced sensors and AI-driven analytics to predict where the next bottleneck will occur. This trend toward “smart” monitoring is a direct response to the unpredictability of new data center clusters. Instead of building new power plants, which can take a decade, operators are looking for ways to squeeze more capacity out of existing lines by tracking thermal limits in real time, allowing for more aggressive, and riskier, utilization of current assets.
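At its simplest, real-time thermal monitoring means comparing sensor readings against a conductor's temperature limit and flagging the lines with the least headroom. The sketch below is a deliberately reduced illustration: the `LineReading` structure, the line names, the temperatures, and the 10 °C alert margin are all hypothetical, and a production system would rely on a full conductor heat-balance model rather than a fixed threshold.

```python
from dataclasses import dataclass


@dataclass
class LineReading:
    line_id: str
    conductor_temp_c: float  # reported by a hypothetical line sensor
    max_temp_c: float        # assumed design limit for the conductor


def thermal_margin_c(reading):
    """Degrees of headroom left before the line reaches its limit."""
    return reading.max_temp_c - reading.conductor_temp_c


def lines_near_limit(readings, min_margin_c=10.0):
    """Return ids of lines with less than min_margin_c headroom, tightest first."""
    tight = [r for r in readings if thermal_margin_c(r) < min_margin_c]
    tight.sort(key=thermal_margin_c)
    return [r.line_id for r in tight]


# Illustrative readings only.
readings = [
    LineReading("line-A", 62.0, 75.0),
    LineReading("line-B", 71.5, 75.0),
    LineReading("line-C", 68.0, 75.0),
]
flagged = lines_near_limit(readings)
print(flagged)
```

Ranking by remaining margin rather than by raw temperature keeps the output meaningful when lines have different design limits.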

Real-World Applications and Regional Implementations

The deployment of AI-optimized data centers is no longer restricted to remote tech campuses; it is increasingly being integrated into urban and industrial centers to support low-latency applications. In sectors like autonomous logistics and real-time financial trading, the proximity of the data center to the end-user is critical. This has led to the development of “edge-AI” hubs in metropolitan areas, where the computing power is used to manage complex urban systems. These implementations demonstrate that the technology is moving closer to the heart of the civil infrastructure it strains.

One of the more unique use cases involves the integration of localized computing with district heating systems. In some newer regional implementations, the massive amounts of waste heat generated by GPU clusters are being captured and redirected to warm nearby commercial buildings or industrial processes. This circular energy model attempts to mitigate the environmental impact of the data center’s massive consumption. However, such projects remain the exception rather than the rule, as the primary focus for most developers remains securing raw wattage at the lowest possible cost.

Critical Challenges and Infrastructure Constraints

The most significant hurdle facing the expansion of AI infrastructure is the planning mismatch between tech developers and utility companies. While a data center can be constructed and filled with servers in less than two years, the transmission lines required to power it often take seven to ten years to permit and build. This lag has created a massive backlog in interconnection queues, where hundreds of projects sit in limbo, waiting for the grid to catch up. Regulatory disputes over who pays for these upgrades (the tech company or the local ratepayer) further complicate the expansion.

Physical limitations of aging transmission lines also present a hard ceiling for growth. In many parts of the country, the conductors simply lack the capacity to carry the necessary current without overheating, sagging, or creating a fire risk. While updated interconnection protocols and new financial models, such as upfront capital requirements for developers, are being tested, they do not solve the fundamental lack of physical capacity. The trade-off is clear: without a massive, multi-billion-dollar investment in the physical grid, the digital economy will eventually hit a wall where no more “compute” can be added.

Future Outlook and Strategic Resilience

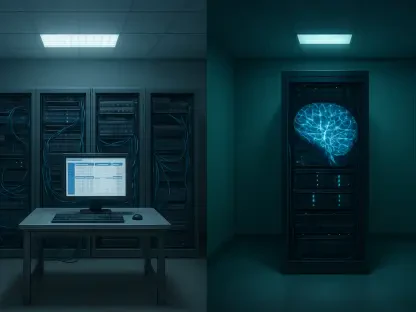

The future of this technology lies in the decoupling of data centers from the traditional centralized grid through the integration of microgrids and on-site energy storage. We are seeing a move toward facilities that house their own small modular reactors or massive battery arrays, allowing them to operate independently during times of grid stress. This self-sufficiency is becoming a strategic necessity for tech giants who cannot afford a millisecond of downtime. Breakthroughs in load-shifting algorithms may also allow some non-critical AI training to be throttled based on real-time grid health.
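A load-shifting policy of this kind can be pictured as a rule that scales back deferrable training work as grid conditions worsen while leaving critical load untouched. The sketch below is one possible shape for such a rule, not a description of any operator's system: the `grid_stress` signal, its 0.5 and 0.8 thresholds, and the megawatt figures are all assumptions made for illustration.

```python
def throttled_power_mw(critical_mw, deferrable_mw, grid_stress):
    """Return site draw in MW after scaling back deferrable work.

    grid_stress is a hypothetical signal in [0, 1]: 0 means a healthy grid,
    1 means an emergency. Critical load is never reduced in this sketch.
    """
    if not 0.0 <= grid_stress <= 1.0:
        raise ValueError("grid_stress must be between 0 and 1")
    if grid_stress < 0.5:
        deferrable_share = 1.0  # normal operation
    elif grid_stress < 0.8:
        deferrable_share = 0.5  # run training at half power
    else:
        deferrable_share = 0.0  # pause training entirely
    return critical_mw + deferrable_mw * deferrable_share


# Hypothetical site: 30 MW of latency-critical load, 70 MW of training jobs.
for stress in (0.2, 0.6, 0.9):
    print(stress, throttled_power_mw(30.0, 70.0, stress))
```

Stepped thresholds are used here only because they are easy to reason about; a smoother curve or a price-based signal would fit the same interface.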

Looking further ahead, the long-term impact on the energy landscape will likely be a total reorganization of how we value reliability. The demand from AI will drive a massive acceleration in the deployment of renewable energy, but only if it is paired with long-duration storage. The transition toward a more decentralized, resilient energy system is inevitable, but the path there will be defined by a constant tension between the speed of innovation and the physical reality of the wires that connect our world.

Conclusion and Assessment

The intersection of AI growth and grid reliability has reached a definitive turning point where software ambitions are being checked by the limitations of copper and steel. This review has shown that while the technological capabilities of AI clusters are advancing at a breathtaking pace, the infrastructure supporting them remains dangerously outdated. The move toward high-density, high-load centers has stripped the grid of its traditional resilience buffers, making every new facility a potential risk to regional stability.

To sustain the digital economy, the next logical step involves a mandatory shift toward “grid-aware” computing architectures. This means moving beyond passive consumption toward a model where data centers act as active participants in grid balancing, utilizing on-site storage and sophisticated load-shedding protocols. The verdict is clear: the era of “limitless” digital expansion is over, and the future will belong to those who can successfully integrate their massive power needs with a fragile and evolving energy ecosystem. Proactive investment is no longer an option but a requirement for survival.