Kwame Zaire is a leading figure in industrial technology, known for his deep expertise in electronics, equipment, and production management. A thought leader in the evolution of manufacturing, he has focused his career on the intersection of predictive maintenance, quality control, and workplace safety. His insights are especially relevant now, as the automotive industry moves from traditional mechanical assembly to highly digitized, AI-driven environments. Zaire’s perspective bridges the complex technical requirements of modern machinery and the practical needs of the workforce that operates it.

This discussion explores the comprehensive integration of artificial intelligence across the automotive value chain, examining how global leaders are moving beyond experimental phases into full-scale operational reality. We delve into the mechanics of collaborative data ecosystems that track sustainability, the democratization of technology through self-service AI platforms for non-technical staff, and the radical shift from physical prototypes to high-fidelity digital simulations. Furthermore, the conversation highlights the role of real-time quality monitoring and the emerging potential of humanoid robotics in creating autonomous, networked digital facilities.

How do you balance the push for immediate return on investment with the need for long-term innovation when scaling AI across development and sales? Please provide a step-by-step breakdown of how you identify which legacy processes are most ready for an AI-supported transformation.

Balancing immediate financial returns with long-term technological vision requires a strategy that treats efficiency and innovation as two sides of the same coin. We start by identifying business imperatives where AI can act as a force multiplier, then scale those capabilities from the initial development phase through production and into sales operations. To identify legacy processes ready for transformation, we follow three steps. First, we look for areas with high data density but low processing speed, such as customer communications or manual manufacturing optimizations. Second, we evaluate the potential for “generative” impact, where AI can take over repetitive tasks such as specification analysis, as it already does in our current engineering workflows. Finally, we prioritize “series production” use cases, where hundreds of small AI interventions collectively deliver a large return by reducing waste and shortening the path to market.
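The three screening steps above can be illustrated as a small scoring pass over an inventory of legacy processes. This is a minimal sketch under stated assumptions: the `LegacyProcess` record, its 0-to-1 ratings, the way the first criterion is folded into a single "data gap" value, the equal weighting, and the example processes are all hypothetical choices for illustration, not a method described in the interview.

```python
from dataclasses import dataclass


@dataclass
class LegacyProcess:
    # Hypothetical inventory record; every rating is assumed to be on a 0..1 scale.
    name: str
    data_density: float      # how much usable data the process already produces
    processing_speed: float  # how quickly that data is turned into decisions today
    generative_fit: float    # share of work that is repetitive document/spec analysis
    repeatability: float     # how often the use case recurs in series production


def readiness_score(p: LegacyProcess) -> float:
    """Average of the three screening criteria (equal weights are an assumption)."""
    data_gap = p.data_density * (1.0 - p.processing_speed)  # step 1: rich data, slow use
    generative = p.generative_fit                            # step 2: generative impact
    series = p.repeatability                                 # step 3: series production
    return (data_gap + generative + series) / 3.0


def rank(processes: list[LegacyProcess]) -> list[tuple[str, float]]:
    """Return (name, score) pairs, most transformation-ready first."""
    scored = [(p.name, round(readiness_score(p), 3)) for p in processes]
    return sorted(scored, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Invented ratings, for illustration only.
    inventory = [
        LegacyProcess("customer correspondence", 0.8, 0.4, 0.7, 0.6),
        LegacyProcess("one-off fixture design", 0.3, 0.5, 0.2, 0.1),
        LegacyProcess("specification review", 0.9, 0.2, 0.9, 0.8),
    ]
    for name, score in rank(inventory):
        print(f"{name}: {score}")
```

In practice the weights would reflect local priorities; equal weights simply keep the example easy to follow.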

Moving toward transparent data ecosystems allows for precise tracking of carbon footprints from raw materials to finished components. What specific metrics do you use to measure the success of these collaborative networks, and how do you manage the inherent risks of sharing sensitive data between partners?

The success of a collaborative network like Catena-X is measured by its ability to provide a granular, end-to-end view of a product’s journey, such as the model of the complete CO2 data chain for the BMW iX kidney grille. Specifically, we track resource utilization efficiency and how quickly we can identify early risks in the supply chain. Managing the risks of data sharing means moving toward an open but tightly regulated framework for digital collaboration, one that ensures compliance while fostering transparency. This visibility is vital because it lets every partner in the value chain meet stringent regulatory requirements without revealing its proprietary manufacturing secrets. By focusing on shared sustainability and resilience goals, we turn data sharing from a perceived liability into a strategic advantage for everyone involved.
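At its core, an end-to-end CO2 data chain rolls emissions up a bill of materials. The sketch below assumes each partner shares only a per-unit emissions figure for its own process step, which is one way transparency can coexist with protecting proprietary manufacturing details. The `Component` structure, part names, and all numbers are invented for illustration; this is not Catena-X's data model or API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Component:
    # Hypothetical bill-of-materials node. Each partner reports only the emissions of
    # its own process step (kg CO2e per unit); how those emissions arise stays private.
    part_id: str
    own_emissions_kg: float
    # (sub-component, quantity of it needed per unit of this part)
    inputs: list[tuple[Component, float]] = field(default_factory=list)


def footprint(part: Component) -> float:
    """Cradle-to-gate footprint: own emissions plus quantity-weighted input footprints."""
    return part.own_emissions_kg + sum(qty * footprint(sub) for sub, qty in part.inputs)


def trace(part: Component, qty: float = 1.0, depth: int = 0) -> list[str]:
    """Flatten the chain into auditable lines, one per node, indented by depth."""
    lines = [f"{'  ' * depth}{part.part_id} x{qty:g}: {footprint(part):.2f} kg CO2e per unit"]
    for sub, sub_qty in part.inputs:
        lines.extend(trace(sub, sub_qty, depth + 1))
    return lines


if __name__ == "__main__":
    # Invented figures, for illustration only.
    granulate = Component("polymer_granulate_kg", 2.5)
    coating = Component("surface_coating", 0.8)
    grille = Component("front_grille", 1.2, [(granulate, 1.5), (coating, 1.0)])
    print("\n".join(trace(grille)))
```

Because each node exposes only a number, a downstream partner can verify the total without seeing how any supplier achieved its figure.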

Empowering non-technical employees to build their own AI solutions through self-service platforms shifts the traditional corporate hierarchy. How does the training process work for these workers, and what anecdotes can you share about a grassroots AI tool that significantly improved a daily manufacturing task?

The training process is designed to be immersive and hands-on, centered around our AI Lab where employees can touch and feel the technology while exploring its potential. We provide a generative AI self-service platform and an AI Assistant that acts as a bridge, allowing someone who has never written code to build a custom solution for their specific workstation. One of the most impressive examples involves workers using these tools to analyze mountains of technical standards and documentation that used to take days to sift through manually. By automating the extraction of these specifications, the team at the Landshut facility has been able to focus on high-level quality inspections and precision engineering rather than paperwork. This shift creates a culture of digital literacy where the “innovator” isn’t just an engineer in an office, but the technician on the factory floor who sees a problem and builds the solution themselves.

Shifting from physical prototypes to AI-driven simulations for crash testing and aerodynamics fundamentally changes the engineering timeline. What are the main technical hurdles in ensuring these simulations are as accurate as physical tests, and how has this transition affected your overall product development cycles?

The primary technical hurdle lies in the sheer complexity of the data required to replicate the physical world, particularly when simulating autonomous driving scenarios or high-speed aerodynamic drag. To match the accuracy of a physical test, our AI models must process thousands of variables simultaneously, ranging from material deformation in a crash to the subtle behavior of airflow over a vehicle’s body. This transition has dramatically compressed our development cycles by reducing our historical dependence on building and then destroying expensive physical prototypes. We can now iterate through dozens of designs in the time it once took to build a single clay model or test mule. This shift doesn’t just save money; it allows for more comprehensive testing scenarios that would be impossible or too dangerous to conduct in the physical world, ultimately leading to a safer and more efficient vehicle.

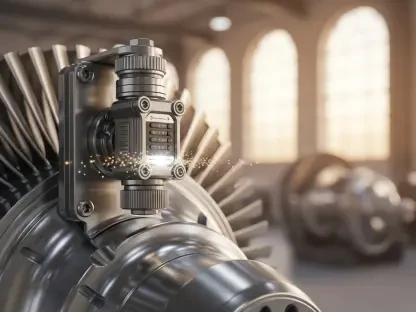

Integrating real-time quality platforms for fault detection alongside humanoid robots represents a significant move toward autonomous assembly. How do you coordinate these various systems to prevent production bottlenecks, and what specific improvements in defect rates have you observed since implementing real-time sensor analysis?

Coordination is handled through our AIQX platform, which keeps a constant watch over the entire production line. Because it analyzes sensor and image data in real time, the system can detect a defect the moment it occurs, so the fault can be corrected before the part moves further down the line. We prevent bottlenecks by having these AI systems communicate directly with our logistics and assembly units, including the humanoid robots we are currently researching for complex tasks. Since implementing this real-time monitoring, we have seen a significant reduction in defect rates, because the system eliminates the lag between a fault occurring and a human inspector finding it. The result is a smooth, continuous flow in which quality is built into the process rather than left to a final checkpoint that might halt production.
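Catching a fault as soon as it occurs, rather than at a final checkpoint, can be illustrated with a simple streaming check on a single sensor channel. This sketch swaps in a rolling z-score detector as a stand-in: AIQX's actual models, which analyze image and sensor data, are not described in the interview, and the class name, window size, threshold, and torque readings below are hypothetical.

```python
from collections import deque
from statistics import mean, stdev


class StreamingDefectDetector:
    """Flags a sensor reading as soon as it deviates sharply from recent in-spec readings.

    Hypothetical stand-in: a rolling z-score on one sensor channel, used only to
    illustrate inline (per-part) fault detection. It is not AIQX's method.
    """

    def __init__(self, window: int = 50, threshold: float = 4.0, warmup: int = 10) -> None:
        self.history: deque[float] = deque(maxlen=window)  # recent accepted readings
        self.threshold = threshold  # z-score above which a reading counts as a defect
        self.warmup = warmup  # readings needed (at least 2) before the baseline is trusted

    def check(self, reading: float) -> bool:
        """Return True if this part should be stopped before it moves down the line."""
        if len(self.history) >= self.warmup:
            mu = mean(self.history)
            sigma = stdev(self.history) or 1e-9  # guard against a perfectly flat signal
            if abs(reading - mu) / sigma > self.threshold:
                return True  # defect: keep it out of the baseline
        self.history.append(reading)
        return False


if __name__ == "__main__":
    detector = StreamingDefectDetector()
    # Invented torque readings (Nm) for consecutive parts, with one faulty fastening.
    readings = [12.0, 12.1, 11.9, 12.05, 11.95] * 4 + [15.0, 12.0]
    for i, value in enumerate(readings):
        if detector.check(value):
            print(f"part {i}: defect at {value} Nm, stop before next station")
```

A flagged reading is deliberately kept out of the rolling baseline, so a run of defects cannot gradually shift what the detector treats as normal.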

Smart transport systems and networked digital facilities require a high level of logistical precision. Could you elaborate on the practical challenges of retrofitting older manufacturing plants with these advanced AI capabilities and the specific steps taken to ensure a seamless transition without halting current production?

Retrofitting an established plant to meet the “iFACTORY” standard is a monumental task because you are essentially installing a digital nervous system into a physical body that was never designed for it. The practical challenge is keeping series production running, with its hundreds of active use cases, while upgrading the underlying infrastructure to support smart transport and networked sensors. We manage this through a phased rollout, starting with logistics optimization, where smart transport systems are introduced to navigate the existing floor plan more efficiently. We then add digital networking facility by facility, making sure every new sensor or AI tool is compatible with the legacy hardware already in place. It is a meticulous process of “plug-and-play” integration that depends on precise planning, so that the assembly lines never stop moving while the factory’s brain is being upgraded.

What is your forecast for the role of physical AI in automotive manufacturing over the next decade?

Over the next ten years, I predict that the distinction between “digital AI” and “physical manufacturing” will entirely disappear, resulting in a landscape where every single process is AI-supported by default. We will see humanoid robots move from research labs to permanent fixtures on the assembly line, working alongside humans to perform tasks that require both extreme precision and adaptability. The data ecosystems we are building today will evolve into a global, transparent web where every car’s carbon footprint and material history are instantly accessible and verifiable. Ultimately, the factory of the future will be a self-healing, self-optimizing organism that uses real-time intelligence to respond to global supply shifts and customer demands in seconds rather than months. For those entering the field, my advice is to embrace this hybrid reality: the most valuable skill of the next decade will be the ability to speak the language of both the machine and the algorithm.