The massive computational power required to train contemporary Large Language Models has pushed the thermal output of modern data centers to a point where traditional air conditioning is no longer a viable solution for maintaining operational stability. As chip designers push the boundaries of transistor density and power consumption, the heat flux generated by high-performance GPU clusters now regularly exceeds the heat dissipation capacity of conventional fans and heat sinks. This evolution has necessitated a fundamental shift in how engineers approach data center architecture, moving away from simple airflow management toward sophisticated direct-to-chip liquid cooling solutions. By circulating specialized coolant directly across the most heat-intensive components, operators can achieve significantly higher power densities without the risk of thermal throttling or hardware failure. This transition represents more than a minor upgrade; it is a critical re-engineering of the physical backbone that supports the global scaling of artificial intelligence.

The Physical Limitations of Air Cooling in Modern Data Centers

Data center cooling has historically relied on moving massive volumes of air, yet this method has hit a physical wall as per-chip power consumption climbs toward the kilowatt range. In 2026, server rack density has increased to the point where air-cooled systems cannot move enough air quickly enough to carry away the heat generated by dense GPU arrays. The result is significant energy waste, as cooling fans must spin at extreme speeds and consume a substantial share of total facility power. Furthermore, reliance on ambient air exposes hardware to external environmental factors, such as humidity and particulate matter, which can affect long-term reliability. To maintain the pace of innovation, the industry has recognized that water conducts heat nearly twenty-five times better than air and absorbs far more heat per unit volume, making liquid the logical medium for the next generation of high-performance computing clusters and massive AI training farms.
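The gap between the two media can be made concrete with the steady-state heat balance Q = ρ · V̇ · c_p · ΔT, which gives the volumetric flow needed to carry away a heat load at a chosen coolant temperature rise. The sketch below is illustrative only: the fluid properties are approximate room-temperature textbook values, and the 1 kW load and 10 K temperature rise are assumptions, not figures from any specific deployment.

```python
# Rough comparison of the coolant flow needed to remove the same heat load
# with air versus water. All numbers are approximate, illustrative assumptions.

# Approximate properties near room temperature: (density kg/m^3, c_p J/(kg*K))
AIR = (1.2, 1005.0)
WATER = (998.0, 4186.0)


def volumetric_flow_m3_per_s(heat_w, density, specific_heat, delta_t_k):
    """Flow required so the coolant absorbs heat_w with a rise of delta_t_k.

    Rearranged from Q = density * flow * specific_heat * delta_t.
    """
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    return heat_w / (density * specific_heat * delta_t_k)


# Assumed scenario: one 1 kW chip, coolant allowed to warm by 10 K.
HEAT_W = 1000.0
DELTA_T_K = 10.0

air_flow = volumetric_flow_m3_per_s(HEAT_W, *AIR, DELTA_T_K)
water_flow = volumetric_flow_m3_per_s(HEAT_W, *WATER, DELTA_T_K)

print(f"Air:   {air_flow * 1000:.1f} L/s")
print(f"Water: {water_flow * 1000 * 60:.2f} L/min")
print(f"Air needs about {air_flow / water_flow:.0f}x the volume of water")
```

Under these assumptions, air has to move several thousand times the volume that water does for the same heat load, which is why fan power grows so quickly as per-chip wattage climbs.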

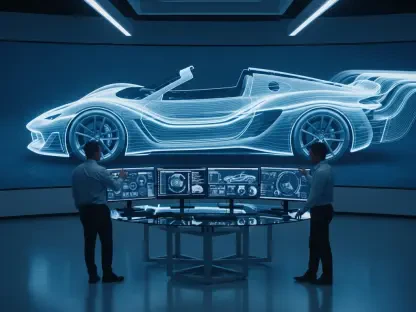

Moving from air to liquid cooling is not merely about changing the medium; it requires an entirely different approach to the physical layout of the server room. Direct-to-chip cooling routes coolant through thin tubing to a cold plate mounted directly on each processor, capturing heat at the source before it can spread into the surrounding environment. This method greatly reduces the need for massive air conditioning units and high-velocity floor fans, allowing data centers to operate with much tighter rack spacing and significantly lower noise levels. Moreover, the heat captured by the liquid can be repurposed for district heating or industrial processes, turning a waste product into a valuable resource. As organizations scale their AI capabilities from 2026 to 2028, these closed-loop systems will likely become the standard for any facility housing high-density workloads. This shift effectively decouples the performance of the silicon from the limitations of the atmosphere surrounding it.

Precision Manufacturing and Component Reliability in Cooling Systems

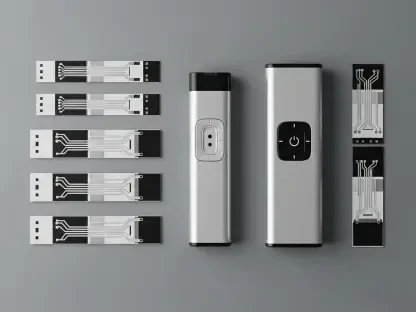

The move to liquid-cooled infrastructure introduces new risks, most notably the potential for leaks that could destroy millions of dollars in sensitive computing hardware. Consequently, the industry has turned to specialized manufacturers like Rapidaccu to provide the precision-engineered components necessary to ensure system integrity. These components, such as high-tolerance liquid cooling connectors and quick-disconnect couplings, must be manufactured to exacting standards to maintain a hermetic seal under high pressure. Rapidaccu utilizes multi-axis CNC machining to create parts that can be repeatedly connected and disconnected without any loss of fluid, a requirement for the modular maintenance of modern server blades. When the cost of downtime is measured in thousands of dollars per second, the mechanical reliability of a single connector becomes just as critical as the logic gates on a processor. Precision manufacturing has thus emerged as a stabilizing force within the broader AI supply chain.

Beyond the connectors, the design of the heat sinks themselves has undergone a radical transformation to maximize thermal transfer within increasingly compact spaces. Advanced manufacturing techniques such as skiving and precision machining allow for the creation of heat sinks with incredibly thin fins and high surface area, which are essential for pulling heat away from high-wattage chips. Companies like Rapidaccu bridge the gap between initial thermal simulation and physical deployment by offering rapid prototyping services that allow engineers to test and refine these designs in real-world conditions. This iterative process is vital for the rollout of next-generation hardware, as it ensures that the physical infrastructure can keep pace with the rapid cycle of chipset innovation. By focusing on the intersection of material science and mechanical engineering, these manufacturing partners provide the essential physical foundation that allows AI developers to push their software to the absolute limit of what is currently possible.

Strategic Implementation: Moving Toward Scalable Thermal Management

Integrating advanced liquid cooling into a global infrastructure strategy requires a shift in perspective from viewing cooling as a utility to seeing it as a strategic asset. Organizations that invest in high-precision thermal management systems can achieve a significantly lower Power Usage Effectiveness (PUE), the ratio of total facility power to the power consumed by IT equipment, which directly translates to reduced operational costs and a smaller environmental footprint. The transition is also driving a shift in the labor market, as data center technicians must now be skilled in fluid dynamics and mechanical assembly in addition to traditional network administration. As the demand for AI processing continues to surge, the ability to rapidly deploy and maintain liquid-cooled clusters will be a primary differentiator for service providers. This necessitates a robust partnership between hardware designers and component manufacturers to ensure that the supply chain can deliver reliable, standardized parts at a scale that matches the explosive global growth of the AI market.
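Power Usage Effectiveness is the ratio of total facility power to the power drawn by IT equipment, so a value closer to 1.0 means less overhead. The following minimal sketch shows how a reduction in cooling power moves that ratio. The two scenarios and every kilowatt figure in them are hypothetical, chosen only to make the arithmetic easy to follow.

```python
# Minimal PUE comparison. All load figures below are hypothetical examples.

def pue(it_kw, cooling_kw, other_overhead_kw):
    """Power Usage Effectiveness = total facility power / IT equipment power."""
    if it_kw <= 0:
        raise ValueError("it_kw must be positive")
    if cooling_kw < 0 or other_overhead_kw < 0:
        raise ValueError("overhead loads cannot be negative")
    total_kw = it_kw + cooling_kw + other_overhead_kw
    return total_kw / it_kw


# Assumed facility: 1000 kW of IT load, 100 kW of lighting and power losses.
IT_KW = 1000.0
OTHER_KW = 100.0

air_cooled = pue(IT_KW, cooling_kw=400.0, other_overhead_kw=OTHER_KW)
liquid_cooled = pue(IT_KW, cooling_kw=100.0, other_overhead_kw=OTHER_KW)

print(f"Air-cooled PUE (assumed loads):    {air_cooled:.2f}")
print(f"Liquid-cooled PUE (assumed loads): {liquid_cooled:.2f}")
```

Because the IT load sits in the denominator, every kilowatt removed from cooling lowers the ratio directly, which is the mechanism behind the operating-cost savings described above.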

The development of AI infrastructure has evolved into a complex balance between computational power and mechanical resilience. Industry leaders have recognized that the success of Large Language Models depends heavily on the physical environment in which the chips operate. They have implemented liquid cooling not just as a fix for heat, but as a comprehensive approach to increasing density and reducing energy consumption. Specialized manufacturers have proved essential in this transition by providing the high-precision components that prevent catastrophic failures in the field. To prepare for the next phase of growth, stakeholders should prioritize the standardization of cooling interfaces and invest in long-term partnerships with reliable mechanical suppliers. By focusing on the durability and precision of the cooling backbone, organizations can keep their hardware operational even as power demands reach unprecedented levels. This strategic focus on the physical layer is what enables the continued expansion of artificial intelligence.