Kwame Zaire has spent decades navigating the volatile waters of semiconductor manufacturing, witnessing firsthand the shift from simple silicon to the complex, high-stakes world of AI-driven hardware. As an expert in electronics and production management, he understands the delicate balance between the massive cooling systems of hyperscale data centers and the thermal efficiency required for the smartphone in a user’s hand. In this conversation, we explore how the sudden surge in AI demand is rewriting the rules of the global supply chain, forcing a reckoning for consumer electronics and exposing the vulnerabilities of smaller industries that rely on the same fundamental building blocks. We dive into the specific operational shifts at firms like Micron, the logistical growing pains of moving production to India and Vietnam, and the long-term implications of a market dominated by a handful of key players.

High-bandwidth memory for AI is currently drawing massive capital away from standard consumer chip production. How are manufacturers balancing these competing needs, and what specific operational steps can they take to prevent consumer device shortages while chasing high-margin data center contracts?

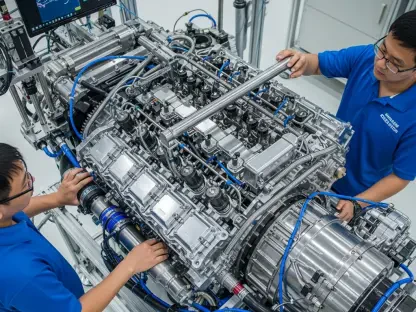

The tension in the cleanrooms right now is palpable because manufacturers are essentially being asked to choose between two different futures. On one hand, you have the steady, high-volume demand of consumer electronics, and on the other, the explosive, high-margin allure of AI data centers. Because high-bandwidth memory (HBM) and standard DRAM often compete for the same wafer capacity, companies like Micron have had to be surgical with their capital allocation. In 2023, we saw Micron significantly cut capital spending and run its fabs below peak levels to avoid a glut, but by 2024 and 2025, the narrative shifted entirely toward AI-driven tightness. Operationally, manufacturers are focusing on technology upgrades rather than just building more floor space, which means they are trying to squeeze more value out of every square inch of their existing facilities. To prevent a total consumer shortage, they are keeping their older fabrication plants running at or near capacity for legacy components while reserving the newest, most advanced lines for the lucrative AI contracts.

The semiconductor industry is increasingly concentrated among a few key designers, foundries, and equipment makers. Given the high fixed costs and long development cycles, what are the primary risks of this consolidation for device makers, and how can they secure priority supply during sudden demand spikes?

We are living in an era of a “layered oligopoly,” where a handful of giants hold the keys to the entire global economy. When you look at the numbers, it’s staggering: NVIDIA holds an 85% market share in graphics processor chips, and they are beholden to TSMC, which controls over 70% of the advanced foundry market. This concentration creates a massive bottleneck because if one piece of equipment from a monopoly supplier like ASML fails or is delayed, the ripple effect is felt globally. For a device maker like Apple or Xiaomi, the primary risk is being “priced out” or pushed to the back of the line by hyperscalers like Microsoft or Google who have deeper pockets. To secure priority, these consumer giants are increasingly designing their own custom silicon to ensure system-level differentiation, but they still have to beg for space on the same manufacturing lines. The only real way to secure supply during a spike is through long-term, multi-year prepayments and deepening “sticky” relationships with the three dominant memory players—Samsung, Micron, and SK Hynix.

Running small language models on-device requires tighter integration of compute power and local memory. What are the biggest hardware hurdles when redesigning laptops or phones for these features, and how do these requirements impact the manufacturing costs and release cycles for next-generation hardware?

The transition to “on-device AI” is not just a software update; it is a fundamental architectural reimagining of the device. The biggest hurdle is the thermal and power envelope; unlike a data center that can use massive cooling infrastructure, a phone has to manage intense compute tasks in a pocket-sized frame. To run these small language models effectively, engineers have to integrate processing power with fast local memory and high-capacity storage on a single system-on-a-chip. This requirement is already pushing older hardware into obsolescence, as seen with early rollouts of features like Apple Intelligence that simply won’t run on previous generations. For manufacturers, this means higher input costs for specialized NAND and DRAM, which inevitably leads to more expensive retail prices. We are also likely to see slightly longer release cycles or “staggered” launches as firms struggle to source the high-end components needed to make these AI features feel snappy rather than sluggish.

Shifting assembly lines to regions like India or Vietnam often results in a 5% to 10% cost increase compared to established ecosystems. What are the hidden logistical challenges in these newer hubs, and how should companies manage the pricing pressure caused by tariffs and tightening export controls?

While the move to India and Vietnam is a strategic necessity to avoid the heavy hand of tariffs and geopolitical tension, it is far from a “plug-and-play” solution. The 5% to 8% cost increase, which can often hit 10% in more complex scenarios, stems from the lack of a mature “supplier ecosystem” that China spent forty years building. In Vietnam or India, a manufacturer might find that while the assembly line is ready, the specialized screws, adhesives, or sub-components still have to be imported, adding lead time and freight costs. These transitions are chaotic on the ground: new customs procedures, developing power grids, and the frantic training of a new workforce. To manage the pricing pressure, companies are forced to either accept thinner margins or pass the costs to the consumer, which is why we’re seeing a consolidation of supply chains among only the largest players who can absorb these shocks. They are also forced to navigate tightening export controls on critical minerals, which adds another layer of paperwork and unpredictability to every shipment.

Sectors like medical technology represent less than 1% of the chip market, leaving them with very little purchasing leverage. What specific strategies can low-volume, high-criticality industries use to maintain their supply chains when hyperscale data center buyers dominate the priority lists for high-tech components?

It is a terrifying reality that the chips needed for a life-saving ventilator or a diagnostic MRI machine are competing for the same silicon as a chatbot. Because medical tech accounts for less than 1% of the market, they are often the first to be deprioritized when a “whale” like Amazon or Meta places a massive order. To survive, these low-volume industries must move away from “just-in-time” inventory and toward “just-in-case” stockpiling, which is expensive but necessary for survival. They can also focus on using more mature, “trailing-edge” nodes that are less attractive to the AI giants, ensuring a more stable, albeit less cutting-edge, supply. Another strategy is to form purchasing consortiums with other small-volume industries to create an aggregate demand that is harder for foundries to ignore. Ultimately, these companies have to build deep, transparent relationships with distributors to get an early warning when the “AI weather” is about to turn stormy.

Massive energy consumption by data centers is driving a surge in demand for power delivery and cooling infrastructure. How is this infrastructure boom impacting the availability of electrical components for other industries, and what are the long-term trade-offs for regional power grids facing this strain?

The sheer scale of energy consumption is hard to wrap your head around; data centers consumed roughly 415 TWh of electricity in 2024 alone. This massive hunger for power is creating a secondary shortage in electrical components like transformers, high-end power management chips, and specialized cooling pumps. For other industries, like automotive or heavy manufacturing, this means longer wait times for basic electrical infrastructure because the “hyperscale” buyers are sweeping up the supply. Long-term, we are looking at a serious strain on regional power grids that were never designed to handle the localized intensity of an AI server farm. The trade-off is often a choice between digital growth and local stability; as these data centers expand, they drive up the cost of electricity for everyone else and force a rapid, expensive acceleration in grid modernization. It’s a daunting balancing act for grid operators who are trying to serve a residential city alongside a facility that has the power profile of a small country.
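To put the 415 TWh figure in perspective, it can be converted into an average continuous load. The sketch below is a back-of-envelope calculation, not a grid model: it assumes the consumption was spread evenly across every hour of 2024 (a leap year), which real data center demand is not. The function name is illustrative only.

```python
# Back-of-envelope: turn an annual energy figure into an average continuous load.
# Assumption: demand is perfectly flat across the year (real load is not).

ANNUAL_TWH = 415            # annual consumption quoted in the interview
HOURS_IN_2024 = 366 * 24    # 2024 was a leap year, so 8,784 hours


def average_load_gw(annual_twh: float, hours: int) -> float:
    """Average continuous power draw in gigawatts."""
    annual_gwh = annual_twh * 1_000   # 1 TWh = 1,000 GWh
    return annual_gwh / hours


print(f"{average_load_gw(ANNUAL_TWH, HOURS_IN_2024):.1f} GW")  # prints "47.2 GW"
```

Under that flat-demand assumption, the quoted figure works out to roughly 47 GW drawn around the clock.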

Memory chipmakers have historically faced severe boom-and-bust cycles, leading to a hesitation to add new capacity. How does this cautious approach to capital investment affect the speed at which the market can respond to AI growth, and what metrics indicate we are reaching a supply ceiling?

The memory industry is haunted by the ghosts of past gluts, specifically the crashes of 2000, 2007, and the more recent 2022-23 downturn. This trauma has created a culture of extreme caution; manufacturers would rather lose out on a few sales than be stuck with a multibillion-dollar “fab” that is sitting idle. This hesitation acts as a massive brake on the AI revolution because you cannot simply “turn on” more capacity; it takes years of planning and billions in precision equipment to bring a new plant online. We know we are reaching a supply ceiling when we see “utilization rates” hitting the high 90s across the board and lead times for basic components stretching from weeks into months. Another key metric is the rapid pivot of 2026 spending toward technology upgrades, such as moving from one generation of HBM to the next, rather than building new physical footprints. This suggests that the industry is trying to innovate its way out of the shortage rather than building its way out, which keeps supply permanently tight.
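The three warning signs described above (utilization in the high 90s, lead times stretching from weeks into months, and spending tilting toward upgrades over new plants) could be tracked as a simple checklist. The sketch below is one hypothetical way to do that. The class, field names, and every threshold are assumptions chosen for illustration; the interview gives no exact cut-offs.

```python
from dataclasses import dataclass


@dataclass
class MarketSnapshot:
    fab_utilization: float      # fraction of capacity in use, 0.0 to 1.0
    lead_time_weeks: float      # typical lead time for basic components
    upgrade_capex_share: float  # share of capex going to technology upgrades


# Illustrative thresholds (assumptions, not figures from the interview).
UTILIZATION_THRESHOLD = 0.95    # stands in for "the high 90s"
LEAD_TIME_THRESHOLD_WEEKS = 8   # stands in for "weeks into months"
UPGRADE_SHARE_THRESHOLD = 0.5   # most spending goes to upgrades, not new fabs


def ceiling_signals(snapshot: MarketSnapshot) -> list[str]:
    """Return the names of the supply-ceiling signals that are currently firing."""
    signals = []
    if snapshot.fab_utilization >= UTILIZATION_THRESHOLD:
        signals.append("utilization")
    if snapshot.lead_time_weeks >= LEAD_TIME_THRESHOLD_WEEKS:
        signals.append("lead_time")
    if snapshot.upgrade_capex_share >= UPGRADE_SHARE_THRESHOLD:
        signals.append("upgrade_heavy_capex")
    return signals


tight = MarketSnapshot(fab_utilization=0.97, lead_time_weeks=14, upgrade_capex_share=0.7)
print(ceiling_signals(tight))
```

The more signals fire at once, the stronger the case that the market is close to its ceiling; any single signal on its own is weaker evidence.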

What is your forecast for the consumer electronics market over the next three years?

For the average consumer, the next three years will likely be defined by “sticker shock” and a noticeable slowdown in the pace of hardware innovation for entry-level devices. As data centers continue to swallow up the high-end memory and processing capacity, I expect we will see a widening gap between premium “AI-ready” devices and budget hardware that feels increasingly obsolete. Prices will likely remain elevated as manufacturers try to recoup the 5% to 10% cost increases from relocating their supply chains and the higher premiums they are paying for silicon. We will also likely see more “paper launches,” where a product is announced but isn’t actually available in meaningful quantities for months due to component shortages. The silver lining is that this pressure will force a new era of energy-efficient design, as companies strive to make on-device AI work within the strict power limits of a handheld battery. Ultimately, it’s a period of transition where the “consumer” is no longer the primary driver of the chip market, and we will all have to adjust to a world where our phones are competing with the cloud for the very heart of their technology.