Across crowded aisles and humming demo cells in Hannover, factory AI quietly crossed a threshold: it stopped promising and started delivering under real constraints, with robots, agentic software, and hardened infrastructure combining to meet production targets rather than pilot milestones. The showcase was not another choreography of lab tricks; it centered on throughput, uptime, and governance. European manufacturers set a clear bar: match human benchmarks on repetitive tasks, cut engineering cycles without eroding safety, and keep industrial data under sovereign control. The standout signals aligned on three fronts. Physical AI on wheels and arms executed logistics and handling at parity with human performance. Agentic copilots compressed design, commissioning, and troubleshooting work. And a layered IT/OT stack, spanning digital twins to virtualized control, carried loads at the edge while synchronizing with the cloud. The net effect pointed to a pattern that is repeatable, portable, and, crucially, defensible.

From Pilots to Production on the Factory Floor

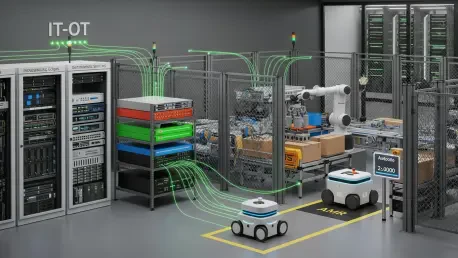

The clearest proof point came from a live deployment at Siemens’ Erlangen plant, where Siemens, NVIDIA, and Humanoid ran the wheeled HMND 01 humanoid on autonomous logistics. The robot moved 60 totes per hour—on par with human throughput—navigating aisle traffic, handoffs, and charging windows without manual orchestration. The capability did not arrive by trial-and-error on the shop floor. A simulation-first workflow drove planning and validation, binding a high-fidelity digital twin to real-time policies and sensor stacks. Through Siemens Xcelerator, teams exercised tasks virtually, tuned routes, and hardened error recovery before wheels touched concrete. Edge inference closed the loop on latency-critical decisions, while fleet coordination synchronized with higher-level schedulers. The result was not just speed; it was repeatability, enabling the same skills to be redeployed across lines and sites with minimal rework.

With this foundation in place, the shift from advisory AI to autonomous action came into focus. Instead of nudging operators with pick lists and prompts, systems executed tasks and adapted to disturbances: rerouting around blocked lanes, reslotting totes, and updating priorities in response to production signals. That autonomy rested on modular, software-defined automation rather than brittle point integrations. Digital twins simulated disturbances and rare events, allowing policies to learn safe boundaries before encountering them live. Open interfaces reduced vendor lock-in, letting planners swap mapping, perception, or scheduling components without refactoring the stack. The upshot was a pragmatic balance: robots handled constrained, high-volume work cells, while humans supervised exceptions and higher-mix changeovers. In aggregate, this division of labor raised overall equipment effectiveness without inflating oversight burdens.
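
To make "rerouting around blocked lanes" concrete, here is a minimal sketch of replanning on a grid map with breadth-first search. It is an illustration only, not the planner used in the Erlangen deployment: the floor layout, the cell coordinates, and the function name `shortest_route` are all hypothetical.

```python
from collections import deque

def shortest_route(grid, start, goal):
    """Breadth-first search on a 4-connected grid; cells marked 1 are blocked.

    Returns the list of cells from start to goal, or None if no route exists.
    """
    rows, cols = len(grid), len(grid[0])
    came_from = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start, then reverse.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in came_from:
                came_from[(nr, nc)] = cell
                queue.append((nr, nc))
    return None

# Hypothetical 3x4 floor map: 0 = free lane, 1 = racking or obstacle.
floor = [
    [0, 0, 0, 0],
    [0, 1, 1, 0],
    [0, 0, 0, 0],
]
start, goal = (0, 0), (2, 3)
route = shortest_route(floor, start, goal)

# A pallet now blocks lane cell (1, 0); replan on an updated copy of the map.
blocked_floor = [row[:] for row in floor]
blocked_floor[1][0] = 1
rerouted = shortest_route(blocked_floor, start, goal)
```

In this toy map the replanned route is the same length as the original, so the disturbance costs no extra travel. A production planner would also weigh traffic, charging windows, and task priorities.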

The Playbook for Scalable AI: Software, Infrastructure, and Data Control

Agentic software showed similar maturation on the engineering side. Schneider Electric’s Industrial Copilot, built on Microsoft Azure AI, targeted PLC programming, HMI logic, and maintenance workflows with a tight loop from query to validated change. Reported impacts were concrete rather than aspirational: up to 50% savings in engineering time, as well as more robust handover from design to operations. Early adopter h2e POWER cited 6,000 hours of stable autonomous operation, a 10% cut in levelized hydrogen cost, and about €500,000 in estimated savings. Those figures mattered because they flowed from bounded scopes, auditable suggestions, and integration with enterprise systems. Prompted code was checked against standards, test benches ran in simulation, and approvals carried traceability back to requirements. The lesson was not that a copilot replaces engineers, but that it shortens iterations, exposes edge cases earlier, and reduces late-stage rework.
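
The review loop described above (standards check, simulated test bench, traceable approval) can be pictured as a simple release gate. The sketch below is an assumption-laden illustration, not Schneider Electric's or Microsoft's implementation: the `SuggestedChange` record, its field names, and the identifiers are invented for the example.

```python
from dataclasses import dataclass
from typing import List, Optional

@dataclass
class SuggestedChange:
    """Hypothetical record for one copilot-suggested change."""
    change_id: str
    requirement_id: Optional[str]   # traceability link back to a requirement
    passes_standards_check: bool    # e.g. result of a coding-standard linter
    passes_simulated_tests: bool    # result of the simulated test bench
    approved_by: Optional[str]      # named human approver

def release_blockers(change: SuggestedChange) -> List[str]:
    """List every reason the change may not be released; empty means ready."""
    blockers = []
    if not change.requirement_id:
        blockers.append("no requirement linked")
    if not change.passes_standards_check:
        blockers.append("standards check failed")
    if not change.passes_simulated_tests:
        blockers.append("simulated tests failed")
    if not change.approved_by:
        blockers.append("no approver recorded")
    return blockers

# Illustrative data only.
ready = SuggestedChange("CHG-1", "REQ-7", True, True, "lead.engineer")
draft = SuggestedChange("CHG-2", None, True, False, None)
```

Returning the full list of blockers, rather than stopping at the first failure, keeps the audit trail complete for each suggestion.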

Infrastructure determined who could scale and who stayed in pilot purgatory. Many plants lacked the compute density, deterministic networking, and platform services necessary to run AI at the edge under production SLAs. Schneider’s partnerships with Dell and AWS illustrated a staged route from design to runtime: ProLeiT provided OT groundwork; AVEVA and NVIDIA Omniverse powered digital twin modeling; AWS IoT Greengrass hosted edge services; and virtualized control on Amazon EC2 extended orchestration without displacing on-prem control loops. This hybrid pattern balanced latency and reliability locally while centralizing heavy simulation and lifecycle data. Data sovereignty remained non-negotiable. Schwarz Digits pressed for secure integration of cloud, AI, and IT services as a lever for competitiveness, aligning identity, encryption, and governance so sensitive industrial data stayed under policy. Open, modular stacks completed the picture, ensuring intelligence could be moved, duplicated, and audited across sites without new lock-in.
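
One way to reason about the hybrid pattern is as a placement rule: a workload runs at the edge when its latency budget is tighter than a cloud round trip, or when policy requires its data to stay on site; otherwise it can be centralized. The sketch below is a simplified assumption, not a description of any vendor's scheduler, and the workload names and millisecond figures are hypothetical.

```python
def place_workload(latency_budget_ms: float,
                   cloud_round_trip_ms: float,
                   data_must_stay_on_site: bool = False) -> str:
    """Return "edge" or "cloud" for one workload under a simple two-rule policy."""
    if data_must_stay_on_site or latency_budget_ms < cloud_round_trip_ms:
        return "edge"
    return "cloud"

CLOUD_ROUND_TRIP_MS = 80.0  # hypothetical measured plant-to-cloud round trip

placements = {
    # Tight control loops cannot wait for a cloud round trip.
    "control_loop": place_workload(10.0, CLOUD_ROUND_TRIP_MS),
    # Heavy twin simulation tolerates long latencies and can be centralized.
    "twin_simulation": place_workload(60_000.0, CLOUD_ROUND_TRIP_MS),
    # Latency-tolerant but policy-bound data stays local.
    "quality_images": place_workload(5_000.0, CLOUD_ROUND_TRIP_MS,
                                     data_must_stay_on_site=True),
}
```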

What Executives Needed to Do Next

This moment favored decision-makers who translated demos into roadmaps with guardrails. The immediate steps were actionable: formalize a simulation-first process tying digital twins to safety cases and change control; ring-fence candidate workloads where autonomy can match human throughput; and stand up a minimum viable edge platform that runs inference, logging, and model updates under IT-grade observability. On the engineering side, embed an agentic copilot in the toolchain with automated test benches and policy checks, then baseline cycle-time and quality metrics so claimed gains can be verified. Procurement should push for open interfaces across mapping, perception, scheduling, and control to future-proof investments, and security teams should anchor data sovereignty in identity, key management, and classification aligned to regulatory zones.
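
Baselining is what makes a claimed gain checkable. As a minimal sketch, assuming engineering hours are logged per comparable task before and after a copilot rollout, the relative reduction can be computed and compared with a claimed figure. The sample hours below are invented for illustration and are not data from any vendor or plant.

```python
from statistics import mean

def relative_reduction(baseline, after):
    """Fractional drop in the mean, e.g. 0.5 means a 50% reduction."""
    baseline_mean = mean(baseline)
    return (baseline_mean - mean(after)) / baseline_mean

# Hypothetical engineering hours per comparable task.
baseline_hours = [40.0, 44.0, 36.0]   # before the copilot, mean 40
copilot_hours = [22.0, 18.0, 20.0]    # after the copilot, mean 20

reduction = relative_reduction(baseline_hours, copilot_hours)
claimed = 0.50                        # the "up to 50%" figure under test
claim_supported = reduction >= claimed
```

With only three samples per group this proves little on its own; a real baseline would need enough tasks, matched by complexity, to separate the tool's effect from normal variation.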

Hannover had already marked a pivot from experimentation to execution, and the contours of a durable playbook were visible. Physical AI met human benchmarks for materials handling when scoped tightly and validated in simulation; agentic software returned measurable time and cost savings when chained to testing and governance; and hybrid edge-to-cloud infrastructure carried AI workloads without surrendering control of industrial data. The organizations that moved fastest selected a lighthouse cell, codified a twin-driven deployment process, and built a modular stack they could replicate line by line. The outcome suggested a shift in emphasis: success depended less on novel algorithms and more on disciplined systems engineering, edge orchestration, and data stewardship. Factories were no longer asking whether AI worked; they were standardizing how to deploy it at scale.